Instance Segmentation

Function

Use an instance segmentation model package to run inference on the input image or surface data. The model package segments the contour of each target object and outputs the corresponding class labels.

Instance segmentation is suited to scenarios that require accurate recognition and localization of individual objects, such as depalletizing, workpiece loading and unloading, and goods picking.

Input and Output

After you import the model package in the Deep Learning Model Package Inference Step, the following input and output ports are displayed.

Input

| Input Port | Data Type | Description |
|---|---|---|
| Image | Image/Color | Image used for deep learning model package inference. Displayed when Input Data Type is set to 2D image. |
| Surface Data | Surface | Surface data used for deep learning model package inference. Displayed when Input Data Type is set to Surface data. |

Output

| Output Port | Data Type | Description |
|---|---|---|
| Visualization Output | Image/Color | Visualized inference results. |
| Instance Masks | Image/Color/Mask[] | Masks of the detected target objects. Regions with non-zero pixel values belong to the mask, and the mask contour is the contour of the target object. Displayed when Input Data Type is set to 2D image. |
| Instance Bounding Boxes | Shape2D/Contour[] | Bounding boxes of the detected target objects. Displayed when Input Data Type is set to 2D image. |
| Instance Bounding Box Masks | Image/Color/Mask[] | Rectangular masks covering the bounding boxes of the detected objects. Regions with non-zero pixel values belong to the mask. Displayed when Input Data Type is set to 2D image. |
| Instance Surface Data | Surface[] | Surface data of each detected target instance. Displayed when Input Data Type is set to Surface data. |
| Surface Data in Bounding Box | Surface[] | Rectangular surface data within each instance bounding box. Displayed when Input Data Type is set to Surface data. |
| Instance Confidences | Number[] | Confidence scores of the detected objects. |
| Instance Labels | String[] | Class labels of the detected objects. |
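The relationship between the Instance Masks, Instance Bounding Boxes, and Instance Bounding Box Masks ports can be illustrated with a minimal sketch. It assumes that a mask is a 2D grid of pixel values in which non-zero pixels belong to the object (as described above) and that the bounding box is the smallest axis-aligned rectangle enclosing those pixels. The function names are hypothetical and are not part of the Mech-Vision API.

```python
from typing import List, Optional, Tuple

Mask = List[List[int]]
# (x_min, y_min, x_max, y_max), inclusive pixel coordinates
Box = Tuple[int, int, int, int]


def mask_to_bounding_box(mask: Mask) -> Optional[Box]:
    """Return the smallest axis-aligned box enclosing all non-zero pixels.

    Assumption: the bounding box is axis-aligned (not rotated).
    """
    xs = [x for row in mask for x, v in enumerate(row) if v != 0]
    ys = [y for y, row in enumerate(mask) if any(v != 0 for v in row)]
    if not xs:
        return None  # empty mask: no object pixels
    return min(xs), min(ys), max(xs), max(ys)


def bounding_box_mask(mask: Mask) -> Mask:
    """Build a rectangular mask whose non-zero region fills the bounding box."""
    height = len(mask)
    width = len(mask[0]) if mask else 0
    out = [[0] * width for _ in range(height)]
    box = mask_to_bounding_box(mask)
    if box is None:
        return out
    x0, y0, x1, y1 = box
    for y in range(y0, y1 + 1):
        for x in range(x0, x1 + 1):
            out[y][x] = 255
    return out


instance_mask = [
    [0, 0, 0, 0, 0],
    [0, 0, 255, 0, 0],
    [0, 255, 255, 255, 0],
    [0, 0, 0, 0, 0],
]
print(mask_to_bounding_box(instance_mask))  # (1, 1, 3, 2)
for row in bounding_box_mask(instance_mask):
    print(row)
```

The bounding box mask covers every pixel of the box, including background pixels that are zero in the instance mask, which is why it is larger than the instance mask for non-rectangular objects.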

Parameter Description

After an instance segmentation model package is imported into this Step, adjust the following parameters as needed.

Model Package Settings

| Parameter | Description |
|---|---|
| Model Manager Tool | Parameter description: Opens the deep learning model package management tool, where you can import a deep learning model package. The model package file is a .dlkpack file exported by Mech-DLK. |
| Model Name | Parameter description: After a deep learning model package is imported, selects the imported model package to be used in this Step. |
| Release Original Model Package After Switching | Parameter description: Controls whether the resources occupied by the original model package are released when you switch to another model package. |
| Model Package Type | Parameter description: Filled in automatically once a Model Name is selected. |
| Input Batch Size | Parameter description: The number of images processed in each inference. |
| GPU ID | Parameter description: Selects the device ID of the GPU used for inference. |
| Input Data Type | Parameter description: Specifies the type of input data. Both 2D image and surface data are supported. The corresponding input port is displayed once an option is selected. |
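To make the meaning of Input Batch Size concrete, the sketch below groups a list of inputs into batches of at most that size and counts how many inference passes result. It is an illustration under the assumption that each inference consumes one batch; the function names are hypothetical and do not describe how the Step batches data internally.

```python
import math
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_into_batches(images: Sequence[T], batch_size: int) -> List[List[T]]:
    """Group inputs into consecutive batches of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [list(images[i:i + batch_size]) for i in range(0, len(images), batch_size)]


def inference_passes(num_images: int, batch_size: int) -> int:
    """Number of inference passes needed if each pass handles one batch."""
    return math.ceil(num_images / batch_size)


batches = split_into_batches(["img0", "img1", "img2", "img3", "img4"], batch_size=2)
print(batches)  # [['img0', 'img1'], ['img2', 'img3'], ['img4']]
print(inference_passes(5, 2))  # 3
```

The last batch may be smaller than the configured size when the number of inputs is not a multiple of it.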

Preprocessing

| Parameter | Description |
|---|---|
| ROI File | Parameter description: Sets or modifies the ROI of the input image. Tuning instruction: A default ROI is applied once the deep learning model package is imported. To edit the ROI, click the Open the editor button, edit the ROI in the pop-up Set ROI window, and fill in the ROI name. Instructions for setting the ROI: Hold down the left mouse button and drag to select an ROI, then click the left mouse button again to confirm. To re-select the ROI, hold down the left mouse button and drag again. The coordinates of the selected ROI are displayed in the “ROI Properties” section. Click OK to save and exit. |
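The effect of an ROI can be sketched as cropping the input to a rectangular region before inference. This sketch assumes an axis-aligned rectangular ROI given as a top-left corner plus width and height; the `Roi` type and `crop_to_roi` function are hypothetical and do not reflect the format of the ROI file or the Step's internal processing.

```python
from typing import List, NamedTuple

Image = List[List[int]]


class Roi(NamedTuple):
    """Hypothetical rectangular ROI: top-left corner plus size, in pixels."""
    x: int
    y: int
    width: int
    height: int


def crop_to_roi(image: Image, roi: Roi) -> Image:
    """Return only the pixels inside the ROI, clipped to the image borders."""
    img_h = len(image)
    img_w = len(image[0]) if image else 0
    x0, y0 = max(roi.x, 0), max(roi.y, 0)
    x1 = min(roi.x + roi.width, img_w)
    y1 = min(roi.y + roi.height, img_h)
    return [row[x0:x1] for row in image[y0:y1]]


# 3 x 4 test image whose pixel values encode (row, column) as 10 * row + column
image = [[10 * r + c for c in range(4)] for r in range(3)]
print(crop_to_roi(image, Roi(x=1, y=1, width=2, height=2)))  # [[11, 12], [21, 22]]
```

Clipping to the image borders keeps the sketch safe when an ROI extends past the edge of the image.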

Postprocessing

| Parameter | Description |
|---|---|
| Inference Configuration | Parameter description: Configures the inference settings for an instance segmentation model package. Click Open the editor to open the inference configuration window. Instruction: Refer to Inference Configuration Tool for detailed parameter descriptions. |
| Class Display Mode | Parameter description: Selects whether classes are displayed by name or by index in the output results. |
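The two Class Display Mode options can be sketched as a lookup from a class index to the text shown in the results. The mode strings `"name"` and `"index"` and the class list are hypothetical placeholders; the actual class names come from the imported model package.

```python
from typing import List


def format_class(class_index: int, class_names: List[str], mode: str) -> str:
    """Return the text shown for a class: its name or its numeric index."""
    if mode == "name":
        return class_names[class_index]
    if mode == "index":
        return str(class_index)
    raise ValueError(f"unknown display mode: {mode!r}")


class_names = ["box", "bag"]  # hypothetical classes from a trained model
print(format_class(1, class_names, "name"))   # bag
print(format_class(1, class_names, "index"))  # 1
```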

Visualization Settings

| Parameter | Description |
|---|---|
| Draw Result on Image | Parameter description: Once enabled, the detection results are drawn on the image. |
| Visualization Method for Results | Parameter description: Specifies how the output results are visualized. |
| Customize Font Size | Parameter description: Determines whether to customize the font size in the visualized outputs. Once this option is selected, set the Font Size (0–10). The default value is 1.5. |

Tuning Examples

Visualization Method for Results

| Visualization Method for Results | Description | Illustration |

|---|---|---|

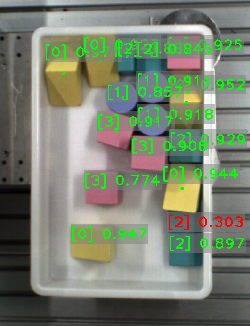

Display each instance |

Visualizes each instance with a unique color. |

|

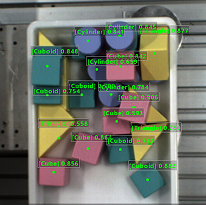

Display instances by class |

Visualizes instances by class, with instances of the same class sharing the same color. |

|

Display instance center points |

Visualizes instance center points, with the instance color related to Confidence threshold. |

|
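The difference between "Display each instance" and "Display instances by class" comes down to what a color is keyed on: the instance or its class label. The sketch below shows that idea with a small, arbitrary palette; the palette and function names are hypothetical and are not the colors or logic used by Mech-Vision.

```python
from typing import Dict, List, Tuple

Color = Tuple[int, int, int]

# Arbitrary illustrative palette; colors repeat once it is exhausted.
PALETTE: List[Color] = [
    (230, 25, 75), (60, 180, 75), (255, 225, 25), (0, 130, 200), (245, 130, 48),
]


def colors_per_instance(labels: List[str]) -> List[Color]:
    """'Display each instance': each instance gets the next palette color."""
    return [PALETTE[i % len(PALETTE)] for i in range(len(labels))]


def colors_by_class(labels: List[str]) -> List[Color]:
    """'Display instances by class': all instances of a class share one color."""
    class_color: Dict[str, Color] = {}
    for label in labels:
        if label not in class_color:
            class_color[label] = PALETTE[len(class_color) % len(PALETTE)]
    return [class_color[label] for label in labels]


labels = ["box", "bag", "box"]
print(colors_per_instance(labels))  # [(230, 25, 75), (60, 180, 75), (255, 225, 25)]
print(colors_by_class(labels))      # [(230, 25, 75), (60, 180, 75), (230, 25, 75)]
```

With a fixed palette, per-instance colors are only unique up to the palette size; a real visualizer would need more colors or generated ones to keep every instance distinct.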