Vision-Guided Single-Case Depalletizing

This tutorial describes how to deploy a 3D vision-guided carton depalletizing application using the "Single-Case Depalletizing" application template case in the Solution Library.

Application scenario: The 3D vision system guides the robot to pick single-case cartons from the pallet and place them on the conveyor line.

Application Overview

- Target object: single-case cartons.
- This application uses a real camera to capture images of the cartons for target object recognition. If you want to use a virtual camera, click here to download the image data of the cartons.
- This application uses deep learning to assist recognition. A deep learning model package is already built into the resource/dl_model directory of this solution.
- Camera: Mech-Eye DEEP camera, mounted in eye-to-hand (ETH) mode.
- Calibration board: CGB-050 is recommended.
- Robot: a six-axis robot. This application uses ABB_IRB6700_150_3_20 as an example.
- IPC: Mech-Mind IPC STD
- Software: Mech-Vision & Mech-Viz 2.0.0, Mech-Eye Viewer 2.4.0
- Communication solution: Standard Interface communication, in which the vision system outputs the path planned by Mech-Viz.
- End tool: vacuum gripper. This application requires a model file of the vacuum gripper in .obj format, which is used for collision detection during path planning. You can download it by clicking here.
- Scene object: scene object model. This application requires a scene model file in .stl format, which represents the real scene and is used for collision detection during path planning. You can download it by clicking here.

Note: If your camera model, robot brand, or target object differs from those in this example, refer to the information provided in the corresponding steps to make adjustments.

Deploy a Vision-Guided Robotic Application

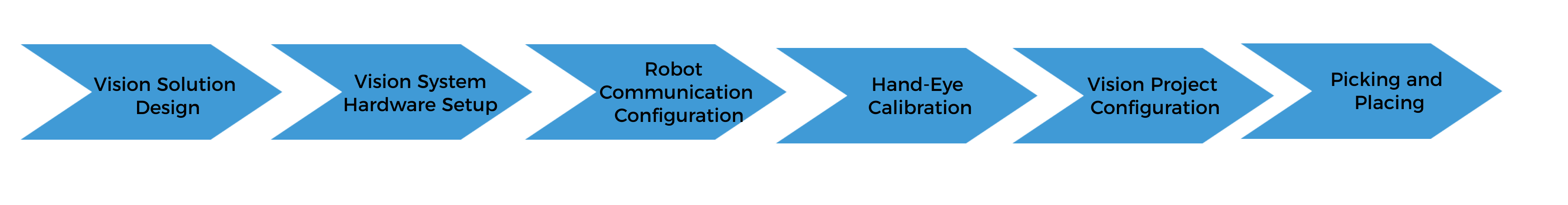

The deployment of the vision-guided robotic application can be divided into six phases, as shown in the figure below:

The following table describes the six phases of deploying a vision-guided robotic application.

| No. | Phase | Description |
|---|---|---|
| 1 | Vision Solution Design | Select hardware models based on the project requirements, and determine the mounting mode, vision processing method, and so on. (This tutorial already provides a vision solution, so you do not need to design one yourself.) |
| 2 | Vision System Hardware Setup | Install and connect the hardware of the Mech-Mind Vision System. |
| 3 | Robot Communication Configuration | Load the robot Standard Interface program and configuration files onto the robot system to establish Standard Interface communication between the Mech-Mind Vision System and the robot. |
| 4 | Hand-Eye Calibration | Perform automatic hand-eye calibration in the eye-to-hand setup to establish the transformation between the camera reference frame and the robot reference frame. |
| 5 | Vision Project Configuration | Use the "Single-Case Depalletizing" application template in the Mech-Vision Solution Library, and plan the robot path with the "Path Planning" Step. |
| 6 | Pick and Place | Based on the robot example program MM_S9_Viz_RunInAdvance, write a pick-and-place program suited to the on-site application. |
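Phase 4 is what links camera measurements to robot motion. The sketch below is a simplified illustration, not Mech-Mind's implementation: it assumes the eye-to-hand calibration result can be expressed as a fixed 4x4 homogeneous matrix giving the camera pose in the robot base frame, and shows how a point detected by the camera (for example, the center of a carton's top face) would be mapped into robot base coordinates. The function `transform_point`, the matrix `T_BASE_CAM`, and all numeric values are hypothetical.

```python
# Minimal sketch (hypothetical names and values): map a point from the camera
# reference frame to the robot base frame with a 4x4 homogeneous transform,
# as an eye-to-hand calibration result might be represented.


def transform_point(T, p):
    """Apply a 4x4 homogeneous transform T (row-major nested lists) to a 3D point p.

    Computes p_base = R * p_cam + t, where R is the upper-left 3x3 block of T
    and t is its last column.
    """
    x, y, z = p
    return tuple(T[i][0] * x + T[i][1] * y + T[i][2] * z + T[i][3] for i in range(3))


# Hypothetical camera pose in the robot base frame (units: mm).
# The rotation is 180 degrees about the x-axis, so the camera's z-axis
# points straight down toward the pallet.
T_BASE_CAM = [
    [1.0,  0.0,  0.0, 1500.0],
    [0.0, -1.0,  0.0,    0.0],
    [0.0,  0.0, -1.0, 3000.0],
    [0.0,  0.0,  0.0,    1.0],
]

if __name__ == "__main__":
    # Hypothetical carton top-face center as seen by the camera (mm).
    p_cam = (100.0, 200.0, 2500.0)
    print(transform_point(T_BASE_CAM, p_cam))  # (1600.0, -200.0, 500.0)
```

Because the camera is fixed in an eye-to-hand setup, the same matrix applies to every capture; it only needs to be recalibrated if the camera or the robot base is moved.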

Next, follow the subsequent sections to complete the application deployment.