Use the Unsupervised Segmentation Module

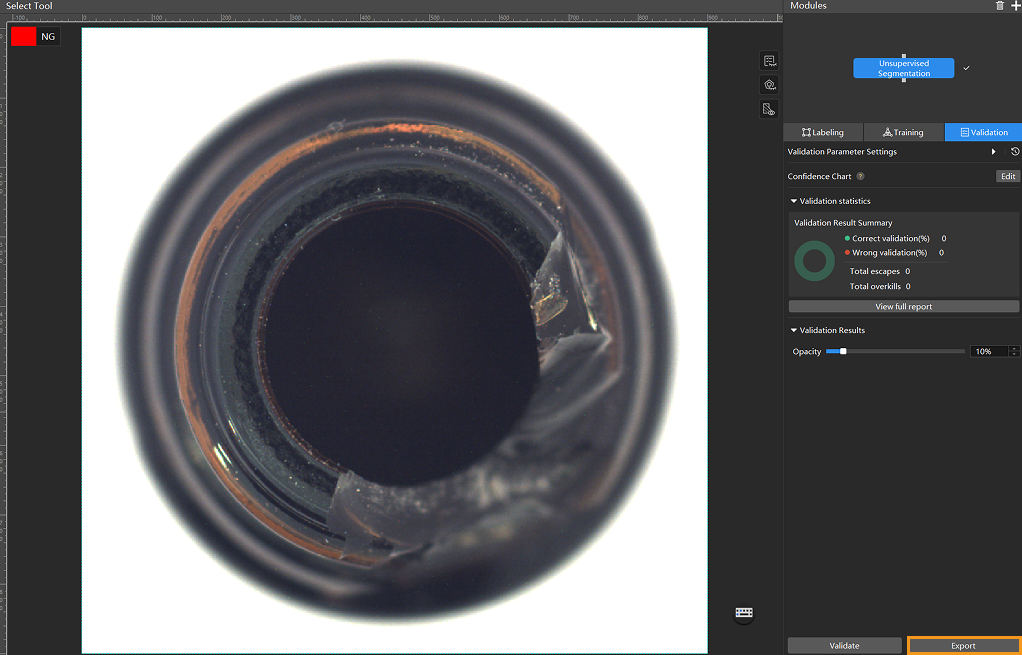

Using bottle-bottom data (click to download) as an example, this document demonstrates how to train a model with the Unsupervised Segmentation module to detect whether an image contains defects.

| You can also use your own data. The overall workflow is the same, but the labeling step differs. |

Preparations

-

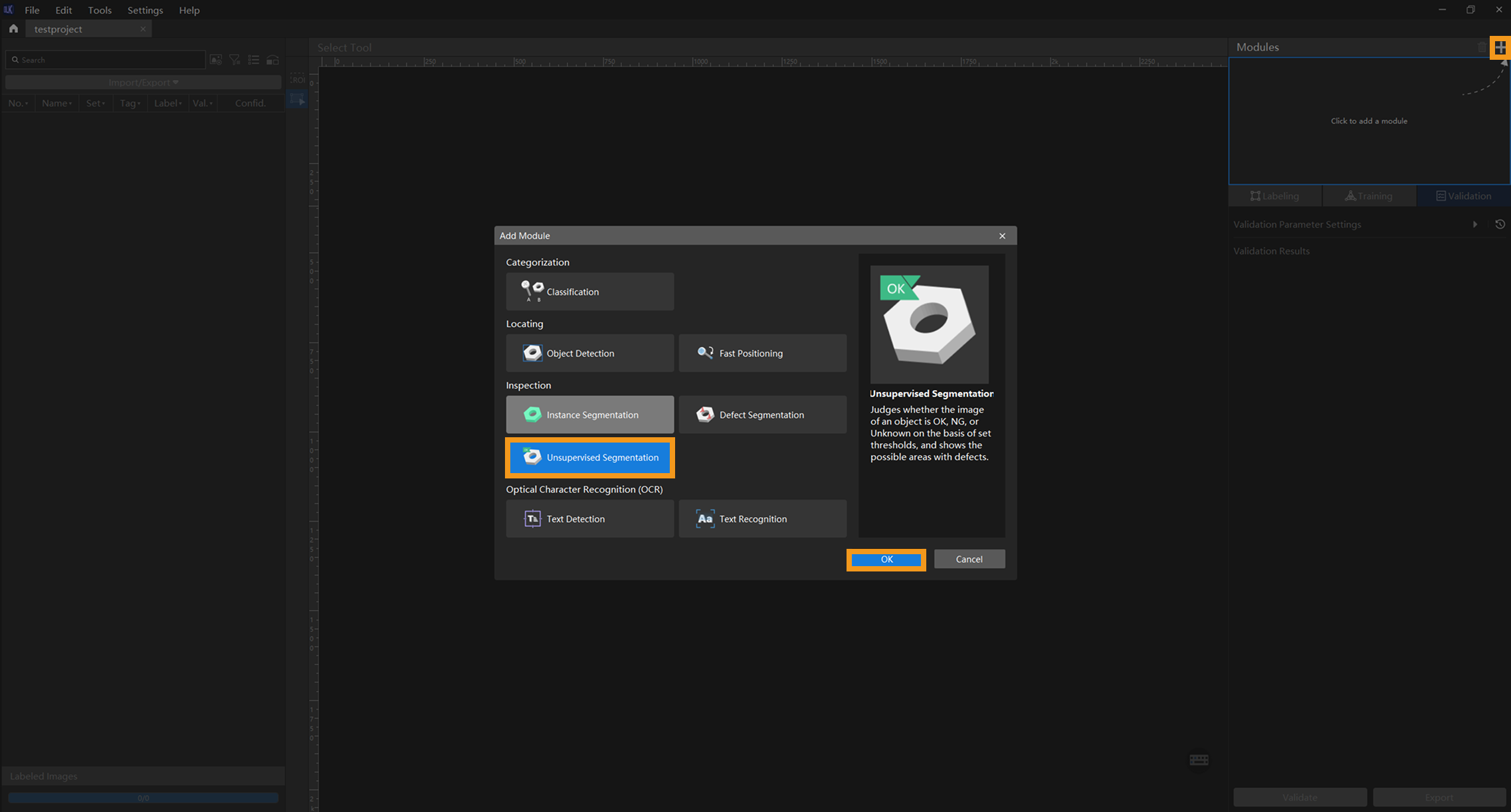

Create a new project and add the Unsupervised Segmentation module: After opening the software, click New Project, name the project, and select a directory to save it. Then, click the icon in the upper-right corner and add the Unsupervised Segmentation module.

If the images contain significant background interference, you can add the Object Detection module before training. If the objects in the images have varying orientations, you can add the Fast Positioning module beforehand. See the Use Cascaded Modules section for detailed instructions.

-

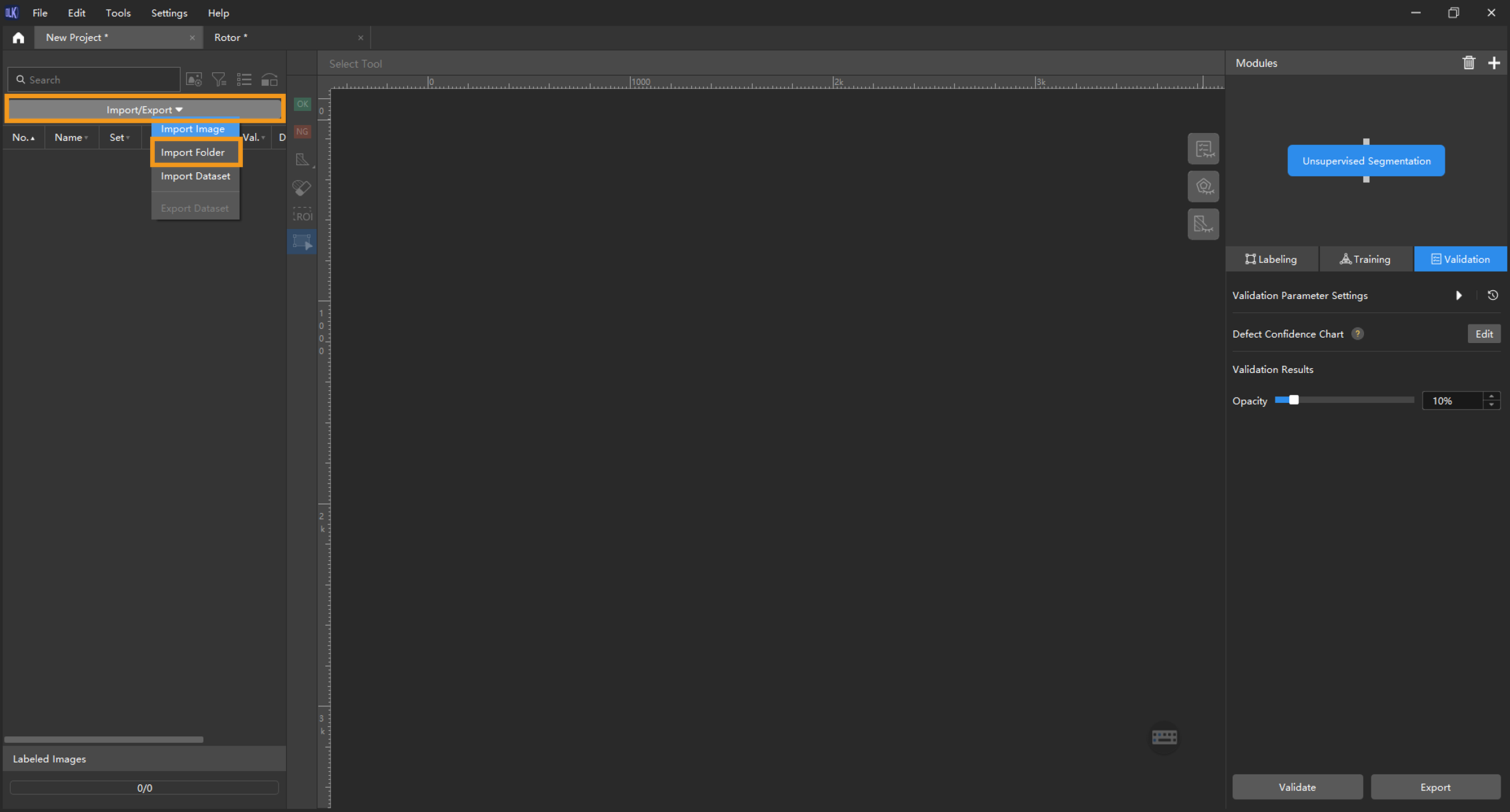

Import the image data of bottles: Unzip the downloaded data file. Click the Import/Export button in the upper left corner, select Import Folder, and import the image data.

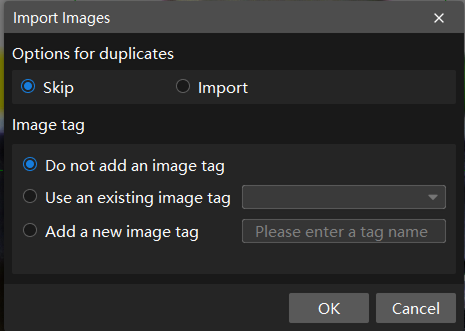

If duplicate images are detected in the image data, you can choose to skip them, import them, or set a tag for them in the pop-up Import Images dialog box. Each image supports only one tag, so adding a new tag to an already tagged image overwrites the existing tag. When importing a dataset, you can choose whether to replace duplicate images.
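If you want to check a folder for duplicates before importing it, the idea can be sketched in a few lines of Python. This is only an illustration: comparing images by a hash of their raw bytes is an assumption, the software's own duplicate check is not documented here, and the names `group_duplicates` and `set_tag` are hypothetical helpers.

```python
import hashlib
from collections import defaultdict


def group_duplicates(images):
    """Group image names whose raw bytes are identical.

    `images` maps a file name to its raw bytes. Byte-level hashing is an
    assumption made for this sketch, not the software's documented method.
    """
    by_digest = defaultdict(list)
    for name, data in images.items():
        by_digest[hashlib.sha256(data).hexdigest()].append(name)
    # Keep only groups with more than one member, in a stable order.
    return sorted(sorted(names) for names in by_digest.values() if len(names) > 1)


def set_tag(tags, name, tag):
    """Assign a tag; an image holds a single tag, so an old one is replaced."""
    tags[name] = tag
    return tags
```

With real files, you could build the `images` mapping by reading each file in binary mode, for example with `pathlib.Path.read_bytes()`.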

-

Dialog box for the Import Images or Import Folder option:

-

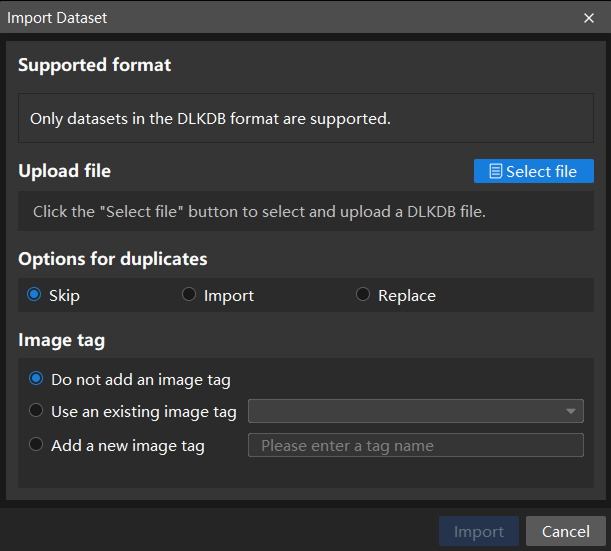

Dialog box for the Import Dataset option:

If you use your own data, you must ensure the image quality. Do not use images in which objects differ in shape, size, or position, or images with changing backgrounds, because such variation greatly degrades model performance. The OK images you use should therefore differ from one another only slightly.

Figure: most images compared with an incorrect example.

-

When you select Import Dataset, you can only import datasets in the DLKDB format (.dlkdb), which are datasets exported from Mech-DLK.

-

You do not need NG images to train a model, but it is recommended that you include some NG images in the validation set to improve the results produced by models.

-

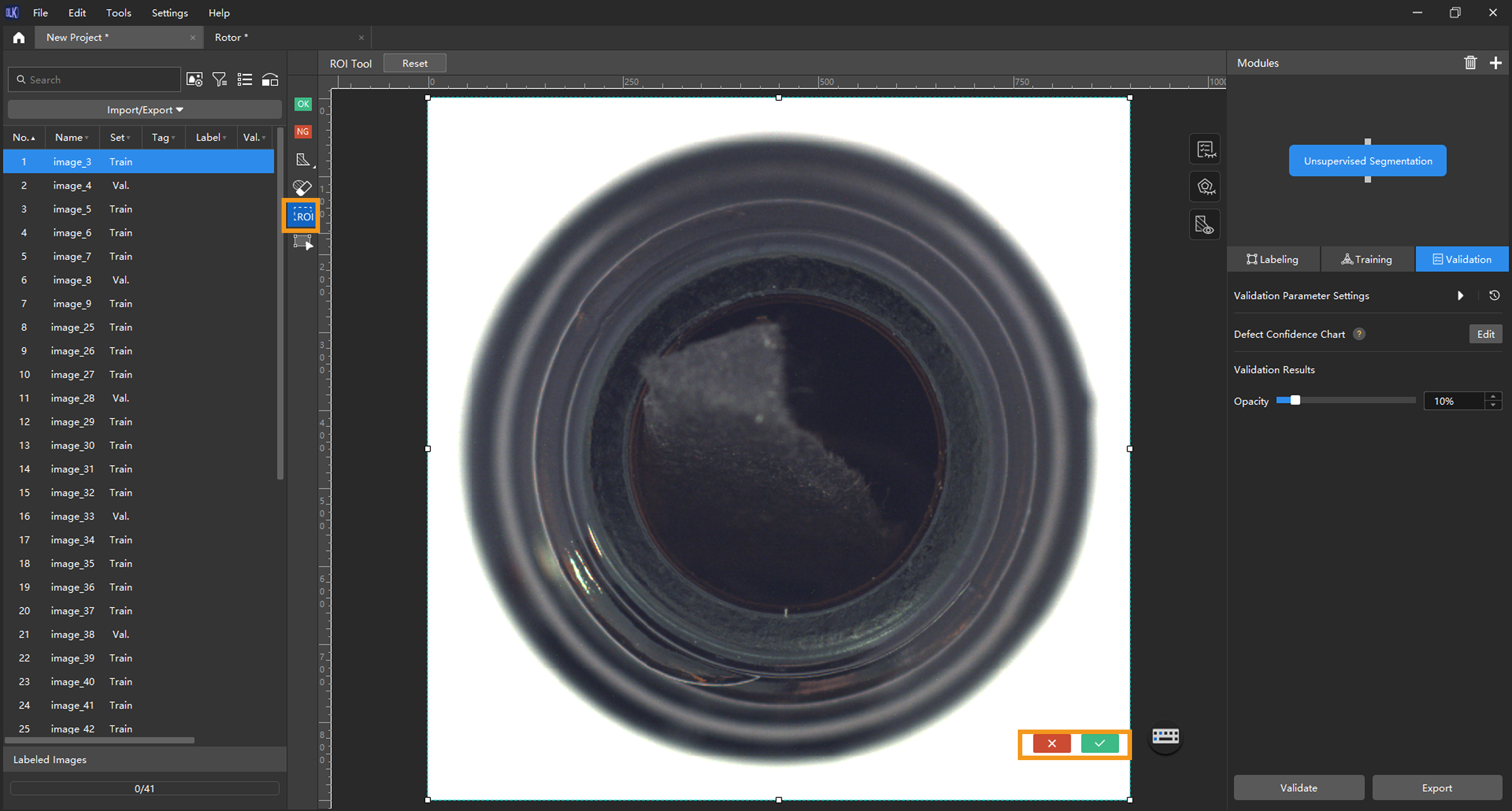

Select an ROI: Click the ROI Tool button and adjust the frame to set an ROI that covers the target objects in the images. Then, click the button in the lower right corner of the ROI to save the setting. Setting the ROI avoids interference from the background and reduces processing time. The ROI boundary should be as close to the outer contours of the object as possible.

The same ROI setting is applied to all images, so make sure that the objects in all images fall within the set ROI.
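Because one ROI is shared by every image, it can help to confirm that every object fits inside it. The sketch below illustrates that check and the cropping effect of an ROI. It assumes boxes in `(left, top, right, bottom)` pixel form and images stored as lists of rows. The function names are hypothetical and are not part of the software.

```python
def inside_roi(roi, box):
    """Return True if `box` lies fully inside `roi`.

    Both are (left, top, right, bottom) in pixels, right/bottom exclusive.
    """
    rl, rt, rr, rb = roi
    bl, bt, br, bb = box
    return rl <= bl and rt <= bt and br <= rr and bb <= rb


def crop(image, roi):
    """Crop a row-major image (a list of pixel rows) to the ROI."""
    left, top, right, bottom = roi
    return [row[left:right] for row in image[top:bottom]]


def objects_outside_roi(roi, boxes_by_image):
    """List the images whose object box is not covered by the shared ROI."""
    return [name for name, box in boxes_by_image.items()
            if not inside_roi(roi, box)]
```

Any image returned by `objects_outside_roi` suggests that the ROI should be enlarged or that the image should be recaptured.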

-

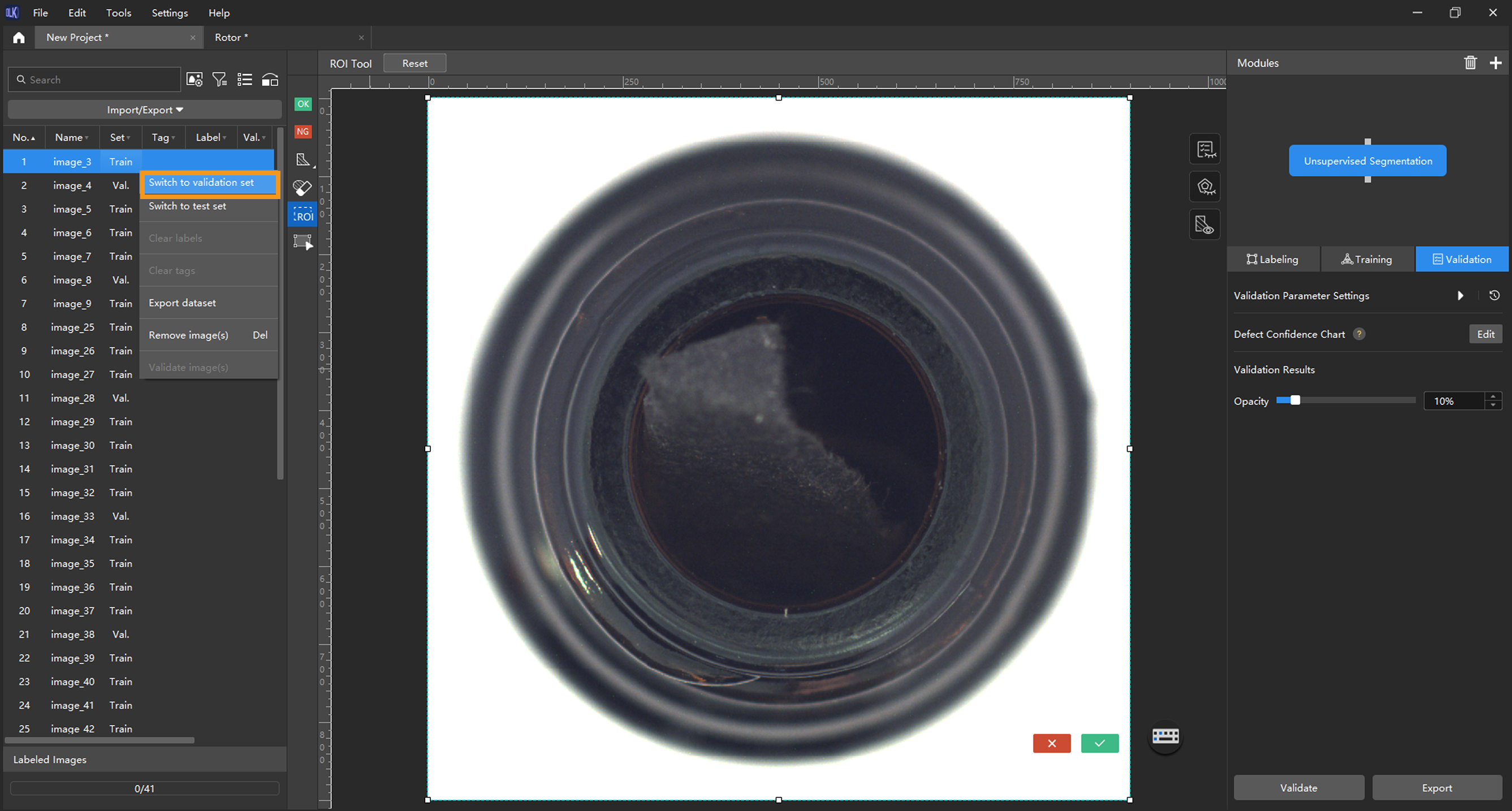

Split the dataset into the training set and validation set: By default, 80% of the images in the dataset are assigned to the training set and the remaining 20% to the validation set. You can click the icon and drag the slider to adjust the proportion.
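The default 80/20 split can be pictured with a short Python sketch. It assumes a random, seeded shuffle. How the software actually selects images for each set is not stated here, and `split_dataset` is a hypothetical helper.

```python
import random


def split_dataset(names, train_ratio=0.8, seed=0):
    """Shuffle names reproducibly and split them into (train, validation)."""
    if not 0.0 < train_ratio < 1.0:
        raise ValueError("train_ratio must be strictly between 0 and 1")
    shuffled = sorted(names)            # fixed starting order
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]
```

For 50 images and the default ratio, this yields 40 training images and 10 validation images. Changing `train_ratio` plays the role of dragging the slider.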

Model Training

-

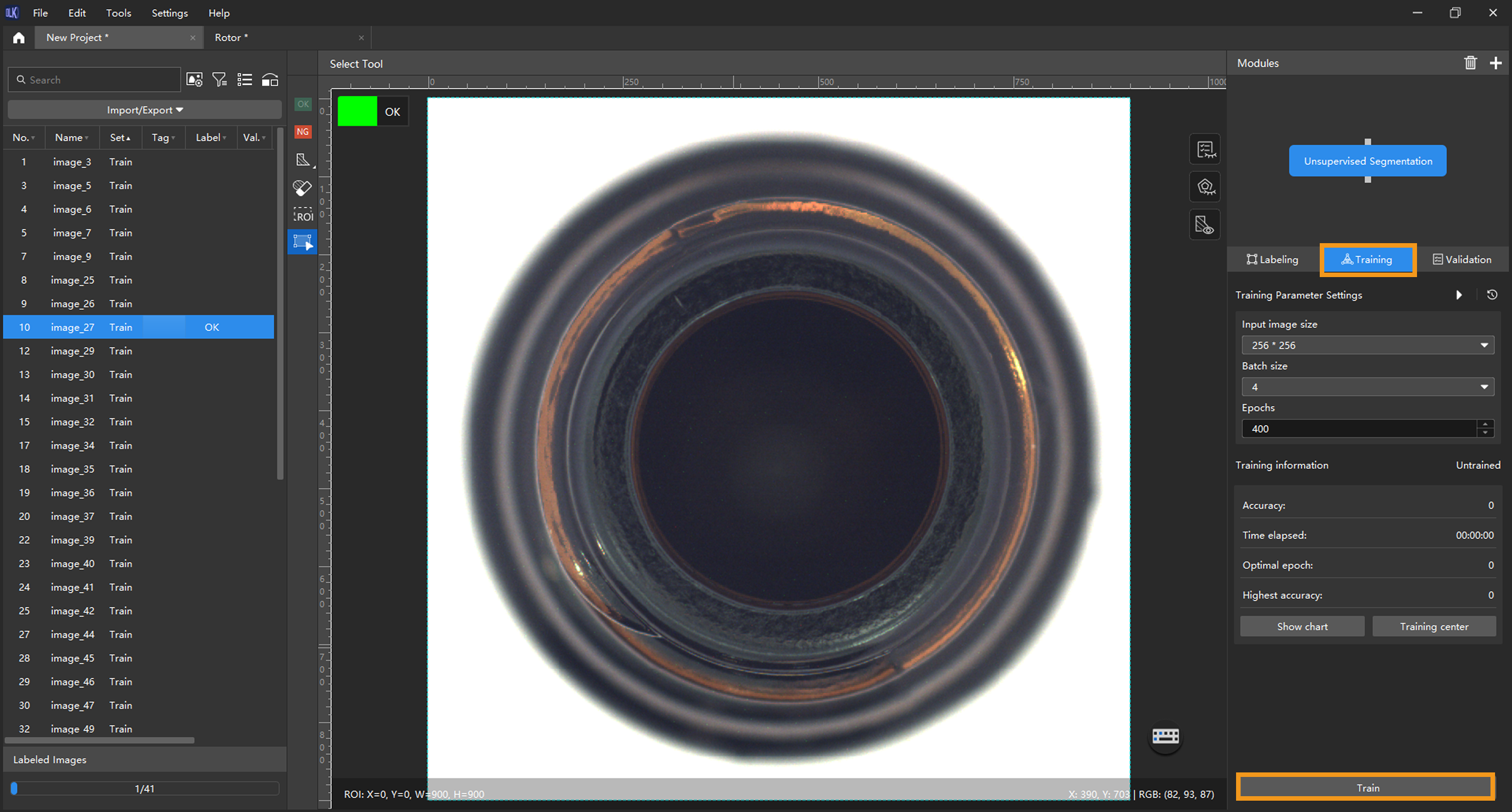

Train the model: Keep the default training parameter settings and click Train to start training the model.

If the training set contains images labeled as NG, these images will be automatically put into the validation set for validation during model training.
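The rule above can be expressed as a small sketch: any image labeled NG is taken out of the training list and appended to the validation list. The label strings `"OK"` and `"NG"` follow this document. The function name and data layout are assumptions for illustration only.

```python
def move_ng_to_validation(train, validation, labels):
    """Return new (train, validation) lists with NG images moved out of train.

    `labels` maps an image name to "OK" or "NG".
    """
    ng = [name for name in train if labels.get(name) == "NG"]
    kept = [name for name in train if labels.get(name) != "NG"]
    return kept, validation + ng
```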

-

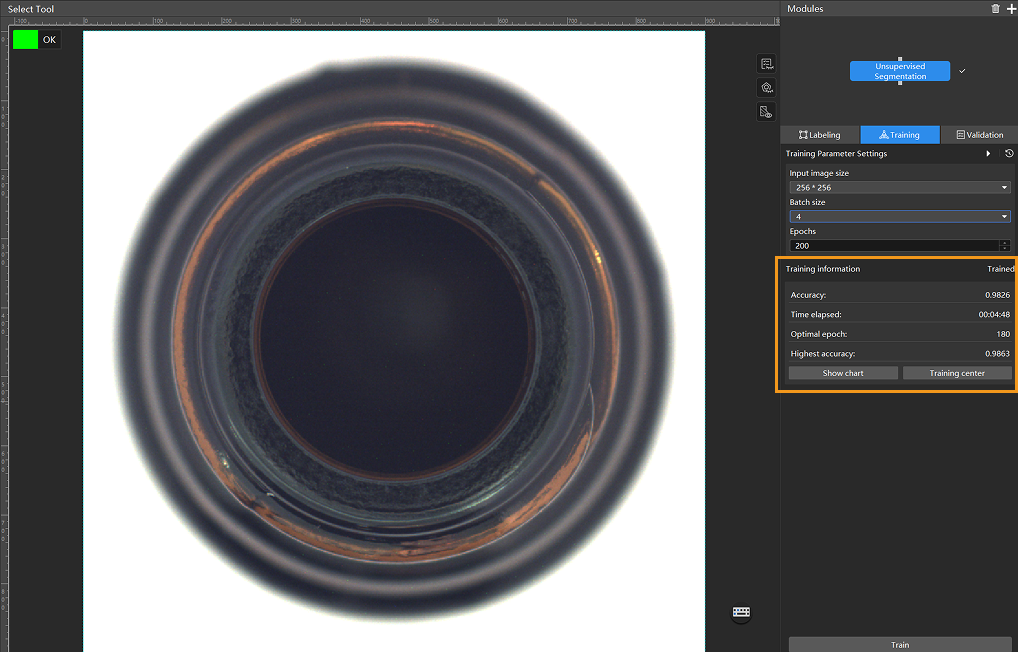

Monitor training progress through training information: On the Training tab, the training information panel allows you to view real-time model training details.

-

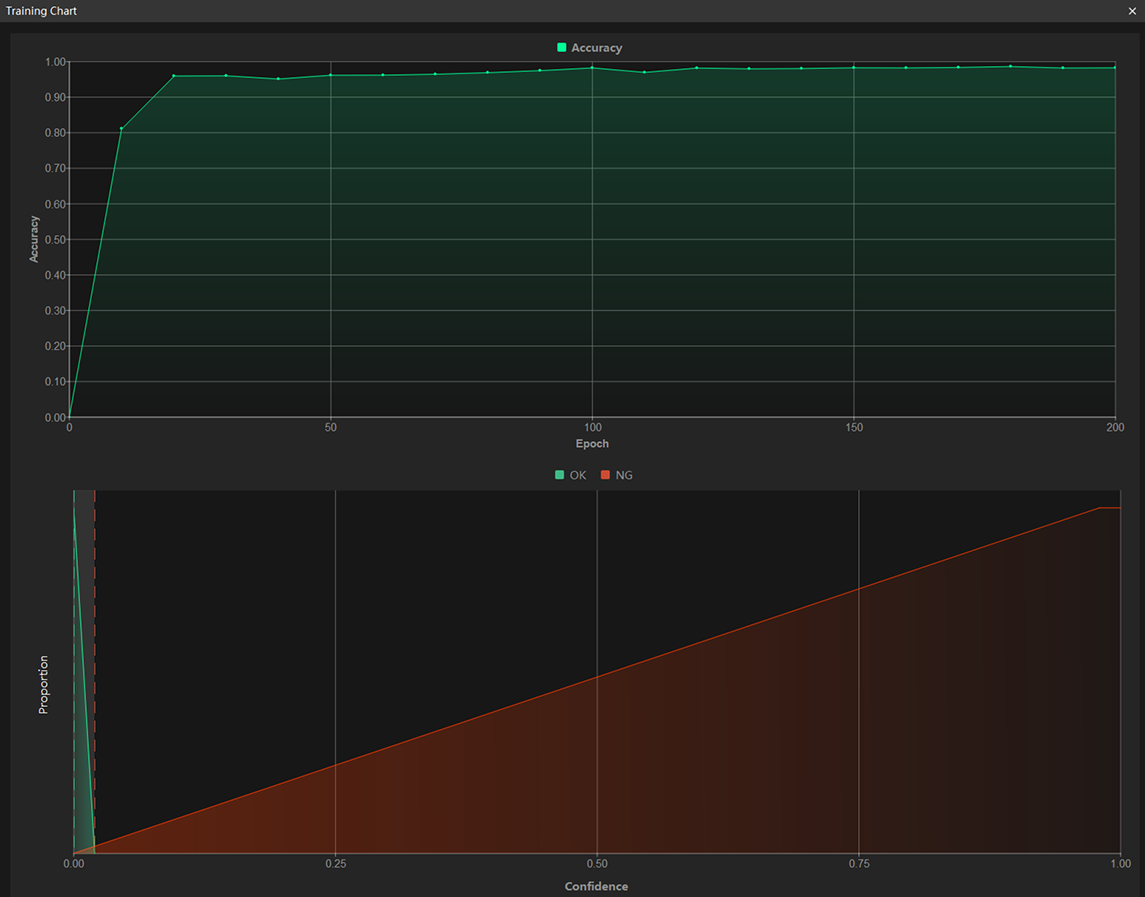

View training progress through the Training Chart window: Click the Show chart button under the Training tab to view real-time changes in the model’s accuracy and loss curves during training. An overall upward trend in the accuracy curve and a downward trend in the loss curve indicate that the current training is running properly.
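If you record the curve values yourself, the "overall trend" can be estimated with a least-squares slope over the epochs. This is a minimal sketch under that assumption. The software itself only displays the curves, and both function names are hypothetical.

```python
def slope(values):
    """Least-squares slope of values against their index (epoch number)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def training_looks_healthy(accuracy, loss):
    """Accuracy trending up and loss trending down over the recorded epochs."""
    return slope(accuracy) > 0 and slope(loss) < 0
```

Using the slope over all recorded epochs tolerates small dips between neighboring epochs, which matches the idea of an overall trend rather than a strictly monotonic curve.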

-

Stop training early based on actual conditions (optional): When the model accuracy has met the requirements, you can save time by clicking the Training center button, selecting the project from the task list, and then clicking the icon to stop training. You can also wait for the model training to complete and observe parameter values such as the highest accuracy to make a preliminary assessment of the model’s performance.

If the accuracy curve shows no upward trend after many epochs, it may indicate a problem with the current model training. Stop the model training process, check all parameter settings, examine the training set for missing or incorrect labels, correct them as needed, and then restart training.
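The two stopping conditions described above (accuracy meets the requirement, or accuracy stops improving) can be written down as simple checks. The sketch below is illustrative only: the `patience` and `min_delta` parameters are assumptions that stand in for "many epochs" and "no upward trend", and they do not correspond to settings in the software.

```python
def reached_target(accuracy, target):
    """True once the latest recorded accuracy meets the required value."""
    return bool(accuracy) and accuracy[-1] >= target


def is_stalled(accuracy, patience=10, min_delta=0.001):
    """True if the best accuracy of the last `patience` epochs does not beat
    the earlier best by at least `min_delta`."""
    if len(accuracy) <= patience:
        return False  # not enough history to judge
    best_before = max(accuracy[:-patience])
    best_recent = max(accuracy[-patience:])
    return best_recent < best_before + min_delta
```

A stalled curve is a cue to stop and review parameters and labels. A reached target is a cue that stopping early is safe.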

Model Validation

-

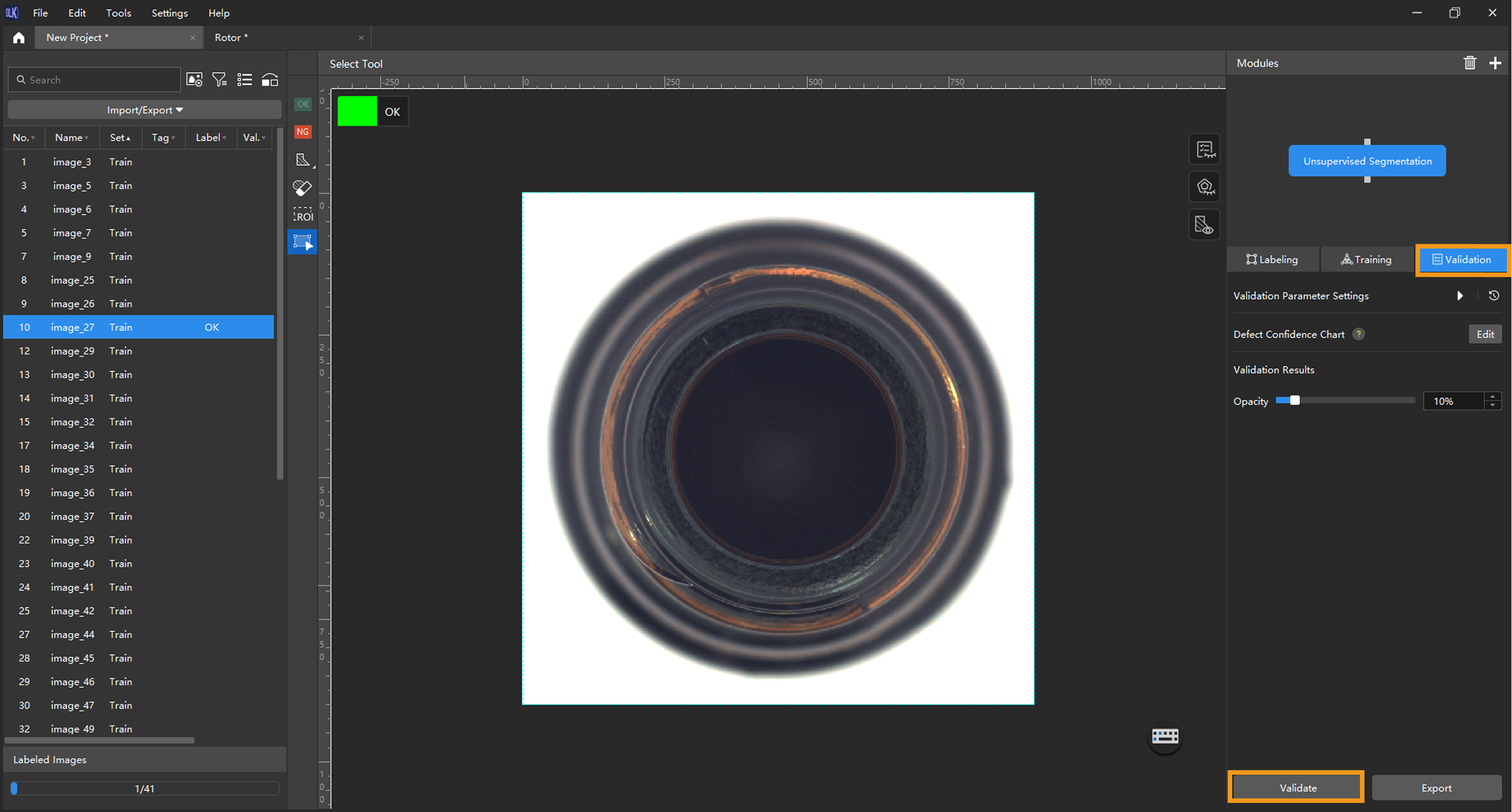

Validate the model: After the training is completed, click Validate to validate the model and check the results.

-

Check the model’s validation results in the training set: After validation is complete, you can view the validation result quantity statistics in the Validation statistics section under the Validation tab.

-

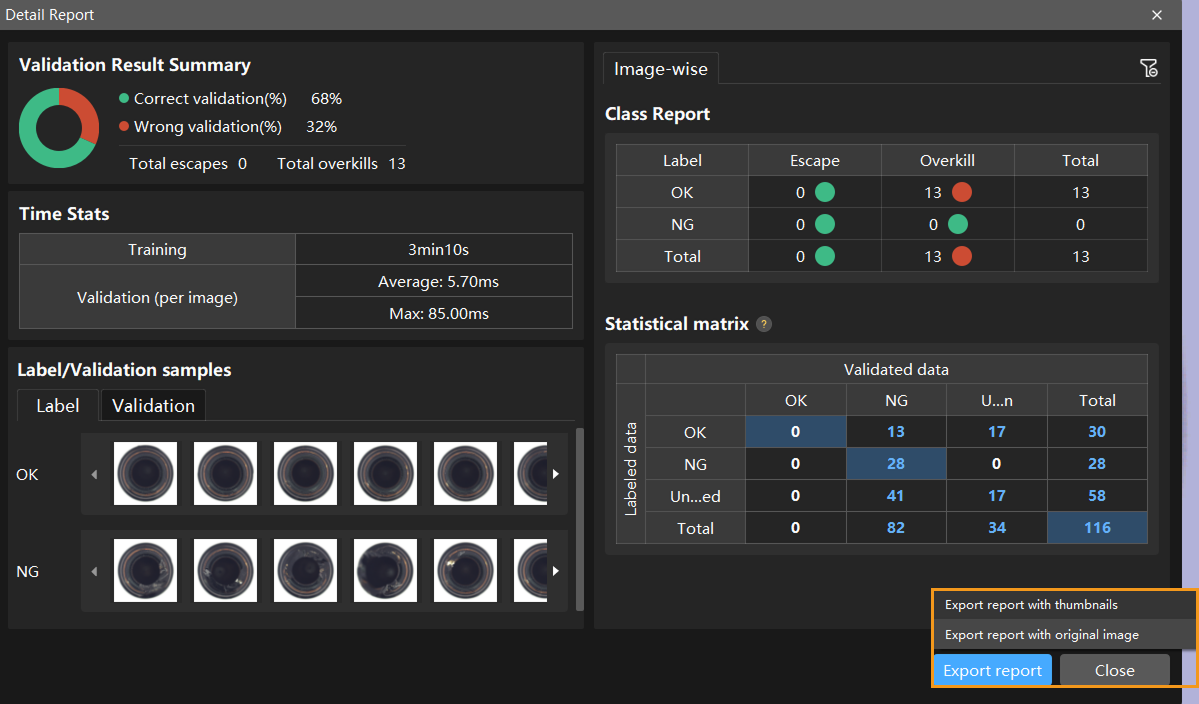

Click the View full report button to open the Detailed Report window and view detailed validation statistics.

-

The Statistical matrix in the report shows the correspondence between the validated data and labeled data of the model, allowing you to assess how well each class is matched by the model.

-

In the matrix, the vertical axis represents labeled data, and the horizontal axis represents predicted results. Blue cells indicate matches between predictions and labels, while the other cells represent mismatches, which can provide insights for model optimization.

-

Clicking a value in the matrix will automatically filter the image list in the main interface to display only the images corresponding to the selected value.
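The structure of the matrix, and the filtering that happens when you click a cell, can be sketched as follows. Each record is a hypothetical `(image name, label, prediction)` tuple. The function names are illustrative and do not reflect how the software stores its results.

```python
from collections import Counter


def statistical_matrix(records):
    """Count (label, prediction) pairs.

    The first key element is the labeled class (vertical axis) and the
    second is the predicted class (horizontal axis).
    """
    return Counter((label, pred) for _, label, pred in records)


def images_in_cell(records, label, pred):
    """Return the images behind one matrix cell, like clicking its value."""
    return [name for name, l, p in records if l == label and p == pred]
```

Cells where the label and the prediction are equal are the matches. Every other cell lists images worth inspecting when optimizing the model.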

If the model training performance is unsatisfactory, please check the labels, adjust the training parameter settings, and restart the training. You can also click the Export report button at the bottom-right corner of the Detailed Report window to choose between exporting a thumbnail report or a full-image report.

-

Restart training: After adding newly labeled images to the training set, click the Train button to restart training.

-

Recheck model validation results: After training is complete, click the Validate button again to validate the model and review the validation results on each dataset.

-

Fine-tune the model (optional): You can enable developer mode and turn on Finetune in the Training Parameter Settings dialog box. For more information, see Iterate a Model.

-

Continuously optimize the model: Repeat the above steps to continuously improve model performance until it meets the requirements.

In the Validation tab, click Adjust thresholds, and in the pop-up dialog box, drag the vertical lines to adjust the thresholds. The green one is used to adjust the threshold of OK results, and the red one is used to adjust the threshold of NG results. Once the thresholds are adjusted, please re-validate the model.
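One way to picture the two thresholds is as cut-offs on a per-image defect score. The sketch below rests on several assumptions that this document does not confirm: a higher score means a more defect-like image, scores at or below the OK threshold count as OK, scores at or above the NG threshold count as NG, and anything in between is left undetermined (labeled "Unknown" here).

```python
def classify(score, ok_threshold, ng_threshold):
    """Map a defect score to a result using two thresholds.

    Assumption: higher scores are more defect-like, and the band between
    the thresholds is undetermined.
    """
    if ok_threshold > ng_threshold:
        raise ValueError("ok_threshold must not exceed ng_threshold")
    if score <= ok_threshold:
        return "OK"
    if score >= ng_threshold:
        return "NG"
    return "Unknown"
```

Under these assumptions, moving the two thresholds closer together shrinks the undetermined band, which is why the model should be re-validated after any adjustment.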

Model Export

Export the model: Click Export and select a directory to save the trained model.

The exported model can be used in Mech-DLK SDK. See the Mech-DLK SDK documentation for details.