Use the Object Detection Module

This document provides rotor data (click to download) and guides you through using the Object Detection module to train a model that detects all rotor positions and outputs the rotor quantity.

| You can also use your own data. The overall workflow is the same, but the labeling step differs. |

Workflow

-

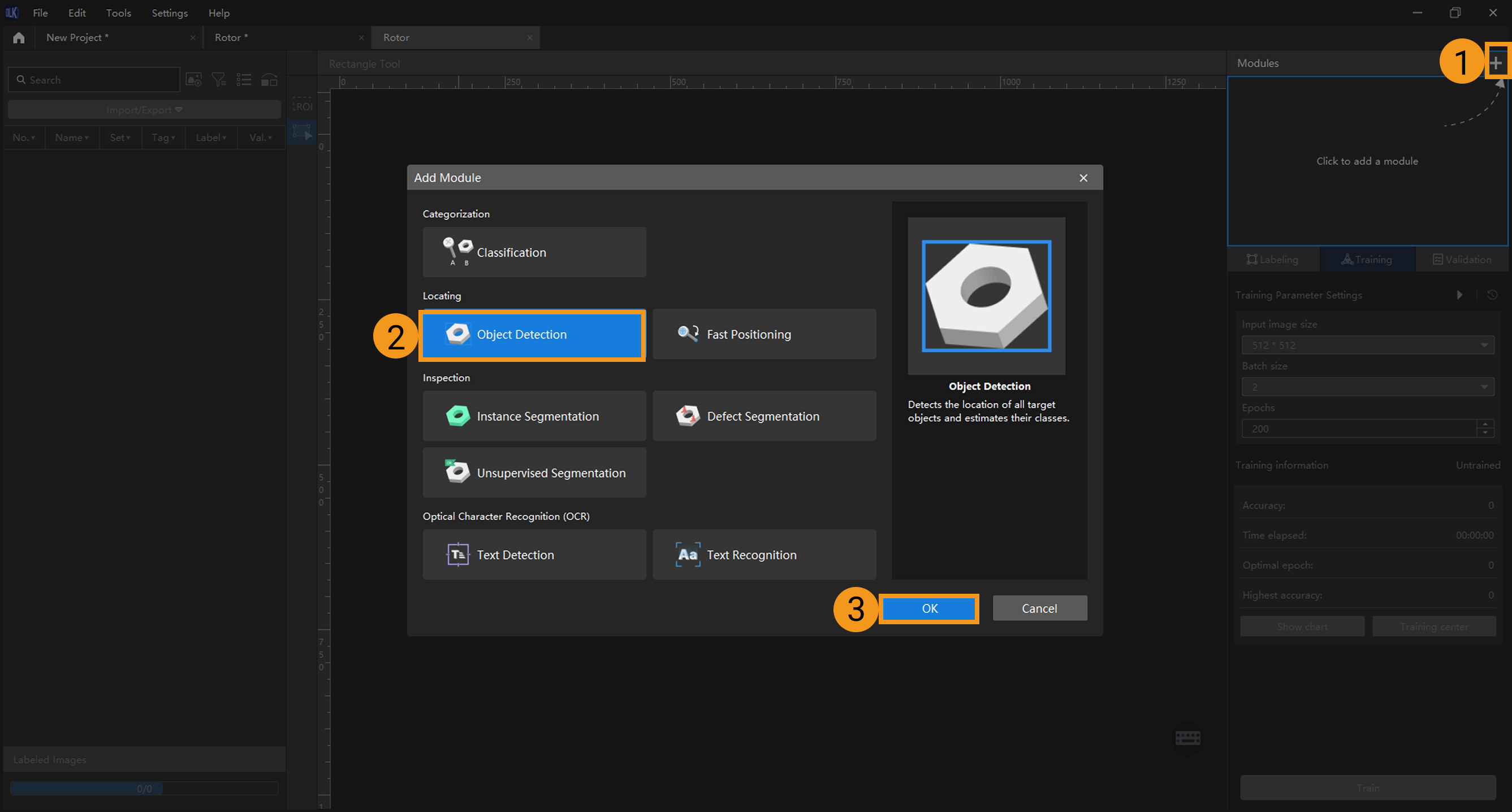

Create a new project and add the Object Detection module: Click New Project in the interface, name the project, and select a directory to save the project. Then click the button in the upper right corner of the Modules section and add the Object Detection module.

-

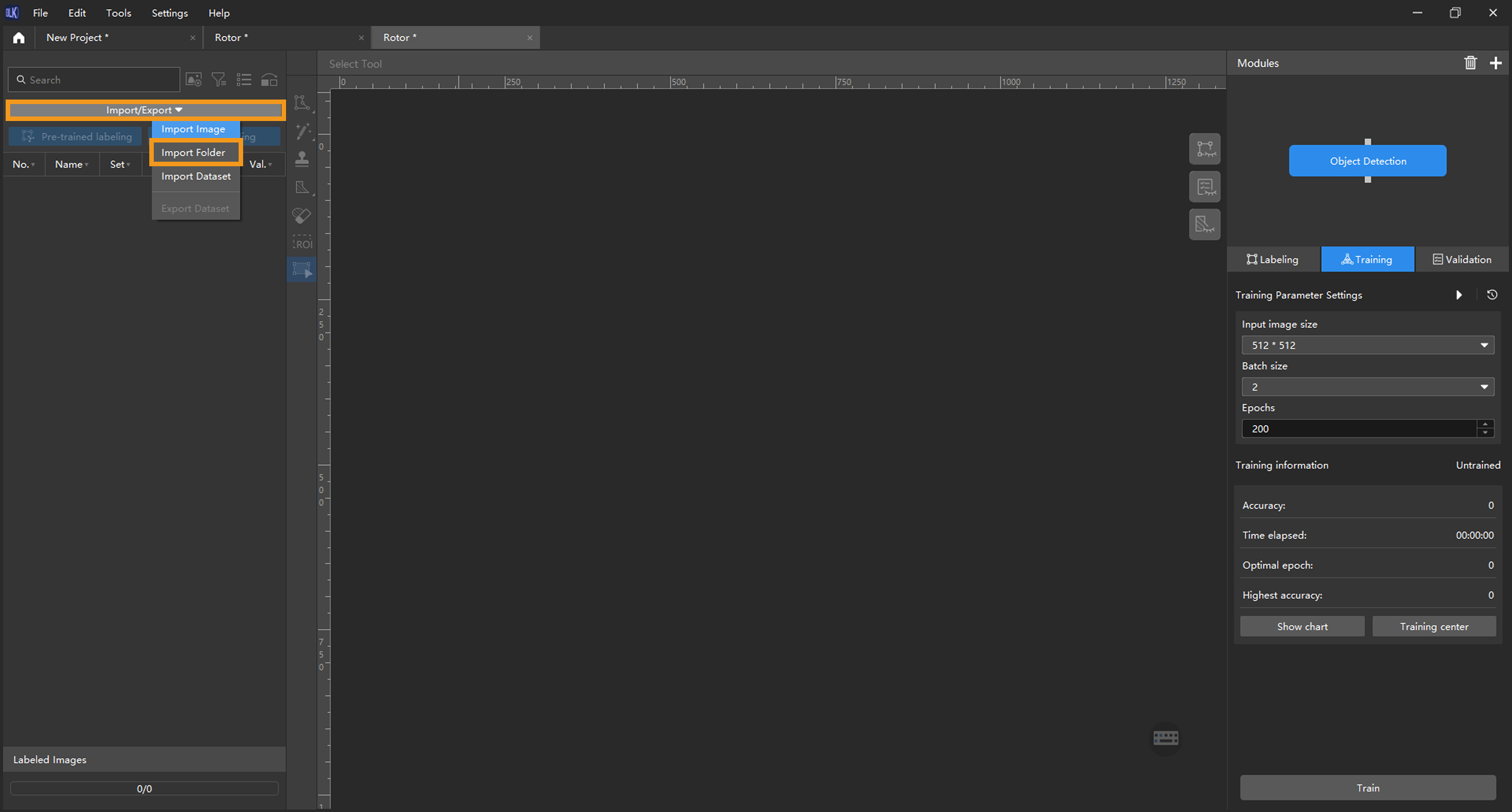

Import the image data of rotors: Unzip the downloaded data file. Click the Import/Export button in the upper left corner, select Import Folder, and import the image data.

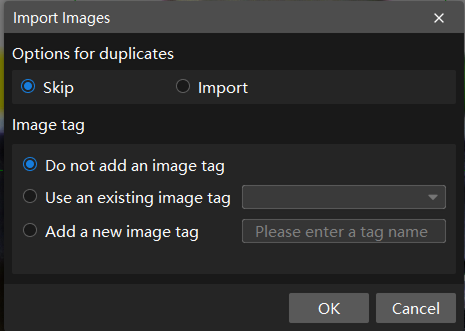

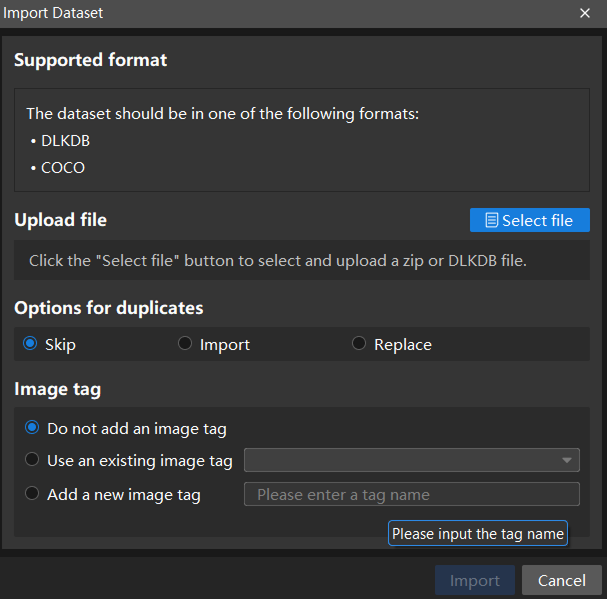

If duplicate images are detected in the image data, you can choose to skip them, import them, or set a tag for them in the pop-up Import Images dialog box. Because each image supports only one tag, adding a new tag to an already tagged image overwrites the existing tag. When importing a dataset, you can choose whether to replace duplicate images.

-

Dialog box for the Import Images or Import Folder option:

-

Dialog box for the Import Dataset option:

When you select Import Dataset, you can import datasets in the DLKDB format (.dlkdb) and the COCO format. Click here to download the example dataset.
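Before importing a COCO-format dataset, it can help to check how many boxes each image carries. The sketch below assumes the standard public COCO layout (`images`, `annotations`, `categories`, with `bbox` as `[x, y, width, height]`). The file names and values are hypothetical, and any extra fields that Mech-DLK may expect are not covered here.

```python
import json
from collections import Counter

def load_coco(path):
    """Load a COCO-style annotation file (plain JSON) into a dict."""
    with open(path, "r", encoding="utf-8") as f:
        return json.load(f)

def count_boxes_per_image(coco):
    """Return {file_name: number of annotated boxes} for a COCO-style dict."""
    counts = Counter(ann["image_id"] for ann in coco["annotations"])
    # Images without any annotation are reported with a count of 0.
    return {img["file_name"]: counts.get(img["id"], 0) for img in coco["images"]}

# Hand-written example data (hypothetical, not the downloadable dataset).
example = {
    "images": [
        {"id": 1, "file_name": "rotor_001.png", "width": 640, "height": 480},
        {"id": 2, "file_name": "rotor_002.png", "width": 640, "height": 480},
    ],
    "categories": [{"id": 1, "name": "rotor"}],
    "annotations": [
        {"id": 10, "image_id": 1, "category_id": 1, "bbox": [100, 120, 50, 50]},
        {"id": 11, "image_id": 1, "category_id": 1, "bbox": [300, 200, 48, 52]},
    ],
}

print(count_boxes_per_image(example))
```

An image that reports 0 boxes is worth a second look before training, since it may simply be unlabeled.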

-

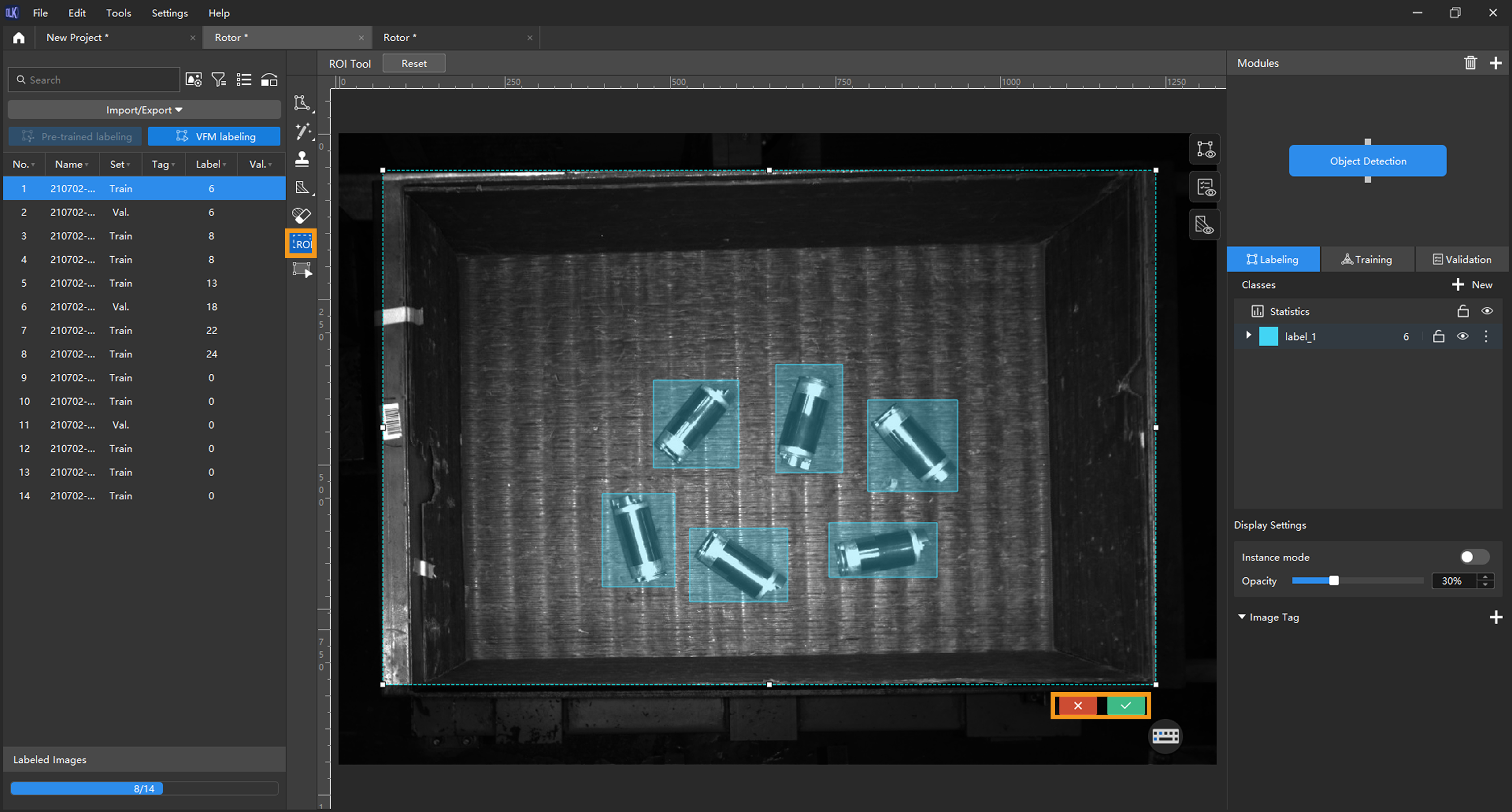

Select an ROI: Click the ROI Tool button and adjust the frame to select the bin containing rotors in the image as an ROI. Then, click the button in the lower right corner of the ROI to save the settings. Setting an ROI avoids interference from the background and reduces processing time.
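To see why an ROI reduces processing time, consider how many pixels remain after cropping. The following is a minimal sketch in plain Python. The function name, the image size, and the ROI coordinates are all hypothetical and only illustrate the idea; this is not how the software implements the ROI internally.

```python
def crop_to_roi(image, x, y, width, height):
    """Return the part of `image` (a list of pixel rows) inside the ROI.

    (x, y) is the upper left corner of the ROI in pixels. The ROI is
    clipped to the image bounds.
    """
    img_h = len(image)
    img_w = len(image[0]) if img_h else 0
    x0, y0 = max(0, x), max(0, y)
    x1, y1 = min(img_w, x + width), min(img_h, y + height)
    if x0 >= x1 or y0 >= y1:
        raise ValueError("ROI does not overlap the image")
    return [row[x0:x1] for row in image[y0:y1]]

# A blank 640 x 480 image and an ROI around a (hypothetical) bin position.
image = [[0] * 640 for _ in range(480)]
roi = crop_to_roi(image, x=160, y=120, width=320, height=240)

kept_fraction = (len(roi) * len(roi[0])) / (len(image) * len(image[0]))
print(f"ROI keeps {kept_fraction:.0%} of the pixels")  # ROI keeps 25% of the pixels
```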

-

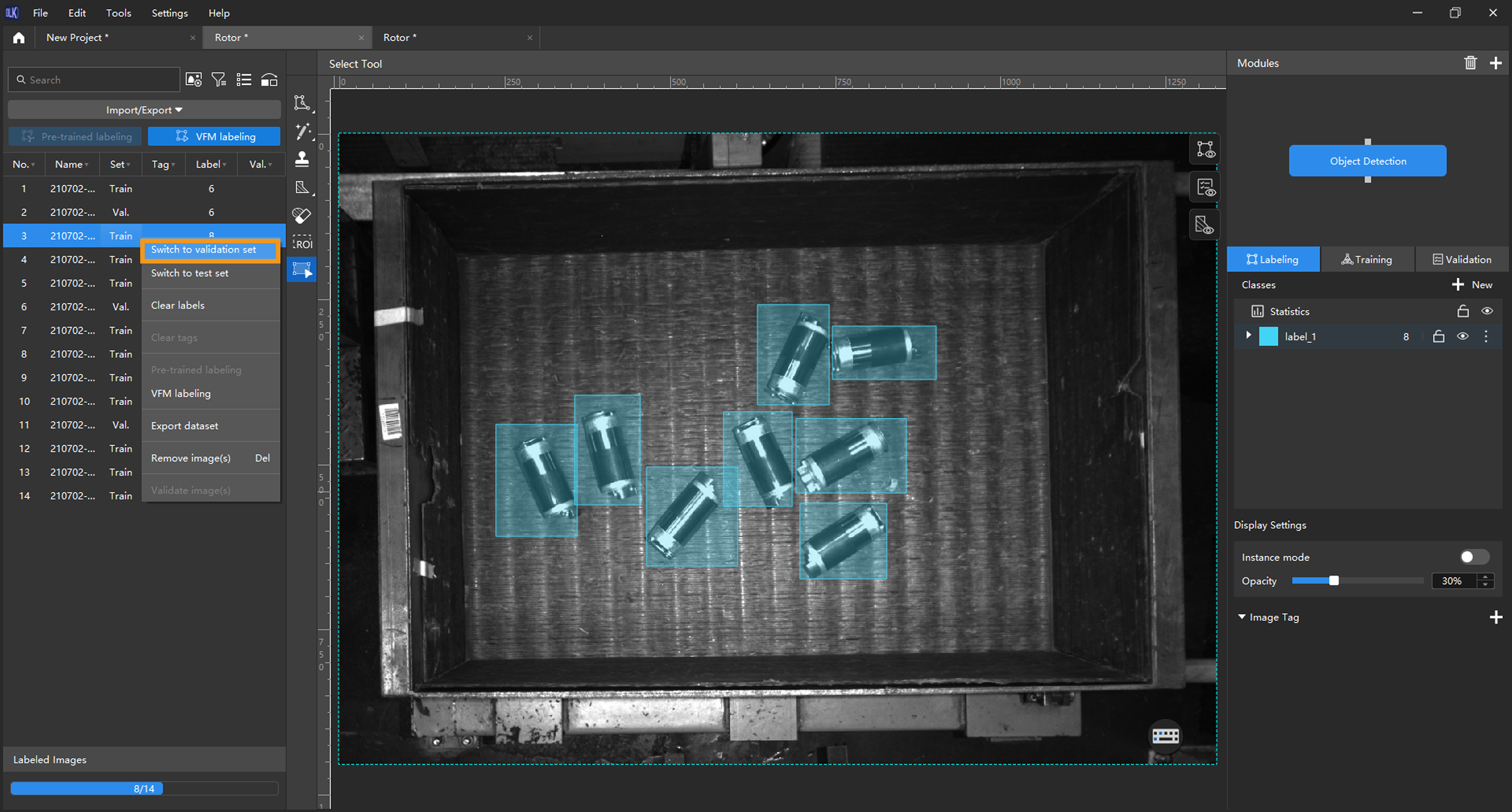

Split the dataset into the training set and validation set: By default, 80% of the images in the dataset are assigned to the training set and the remaining 20% to the validation set. You can click the button and drag the slider to adjust the proportion. Make sure that both the training set and validation set include objects of all classes to be detected. If the default split does not meet this requirement, right-click the name of an image and select Switch to training set or Switch to validation set to change the set to which the image belongs.
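The split and the class-coverage check can be sketched outside the software as follows. This is an illustration under assumptions: the random shuffle, the function names, and the example image names are hypothetical, and the software's own splitting logic may differ.

```python
import random

def split_dataset(labels_by_image, train_ratio=0.8, seed=0):
    """Randomly split image names into (training, validation) lists."""
    names = sorted(labels_by_image)
    random.Random(seed).shuffle(names)  # seeded so the split is repeatable
    n_train = round(len(names) * train_ratio)
    return names[:n_train], names[n_train:]

def missing_classes(names, labels_by_image, all_classes):
    """Return the classes that appear in none of the given images."""
    present = set()
    for name in names:
        present.update(labels_by_image[name])
    return set(all_classes) - present

# Hypothetical dataset: ten images, each labeled with the class "rotor".
labels_by_image = {f"rotor_{i:03d}.png": {"rotor"} for i in range(10)}
train, val = split_dataset(labels_by_image)

print(len(train), len(val))
print(missing_classes(val, labels_by_image, {"rotor"}))
```

If `missing_classes` returns a non-empty set for either list, some images need to be moved between the sets, which corresponds to the Switch to training set and Switch to validation set options.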

-

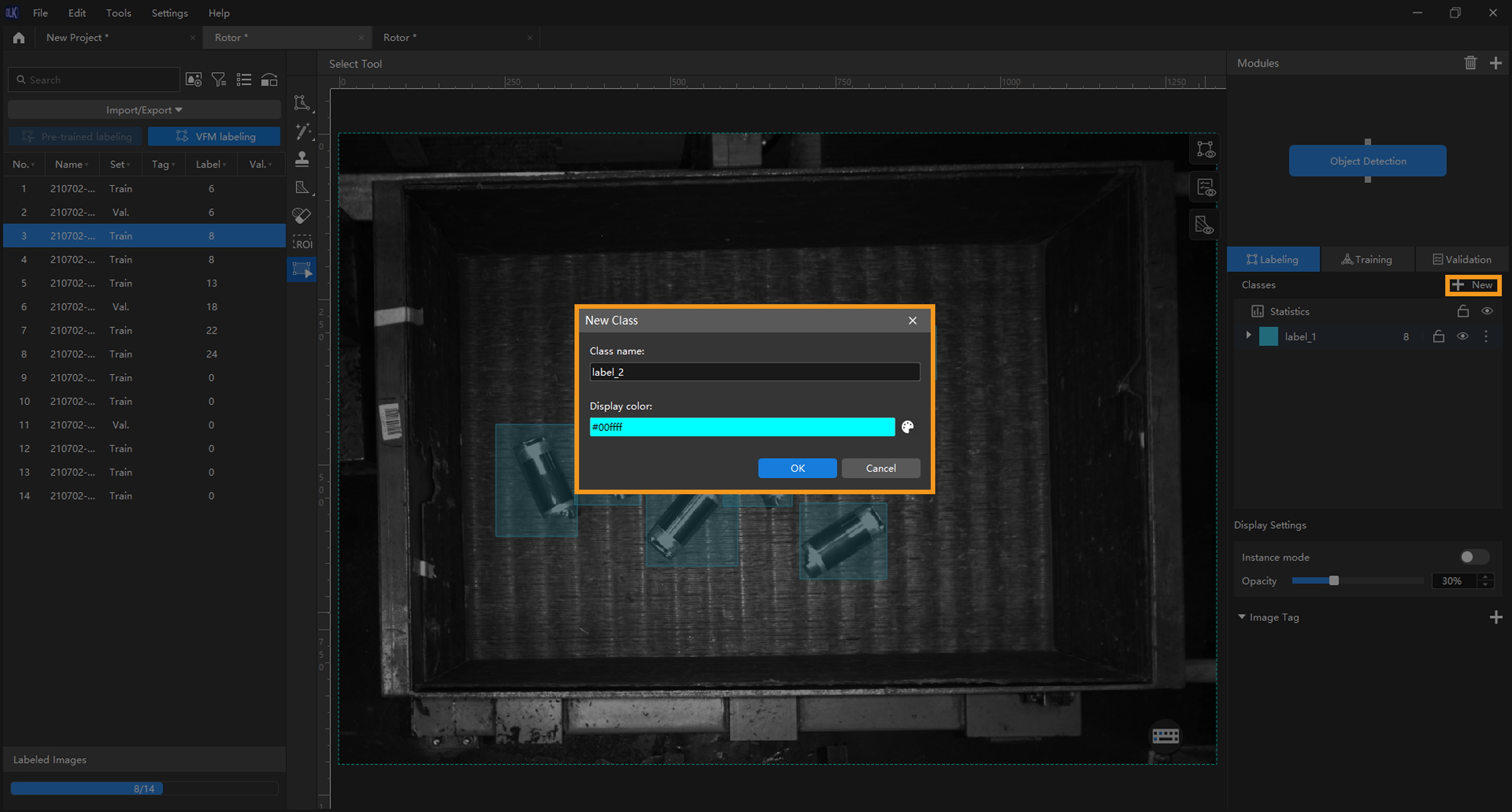

Create classes: Create classes based on the types or characteristics of different objects. In this example, the classes are named after the rotors.

After you select a class, you can right-click it and select Merge Into to move the data of the current class into another class. If you perform the Merge Into operation after training the model, it is recommended that you train the model again.

-

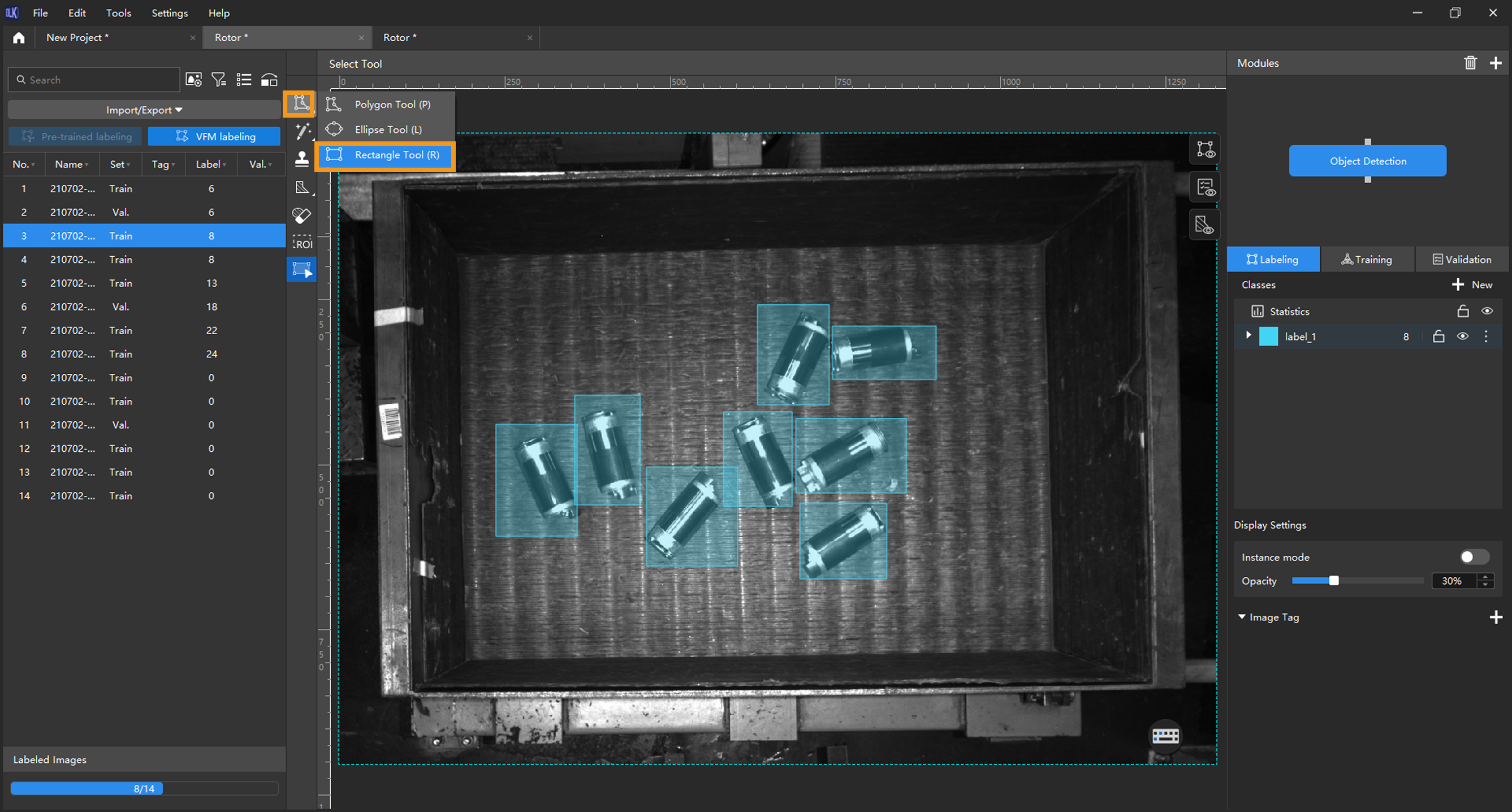

Label images: Make rectangular selections on the images to label all the rotors. Please select the rotors as precisely as possible and avoid including irrelevant areas. Inaccurate labeling will affect the training result of the model. Click here to view how to use labeling tools.

-

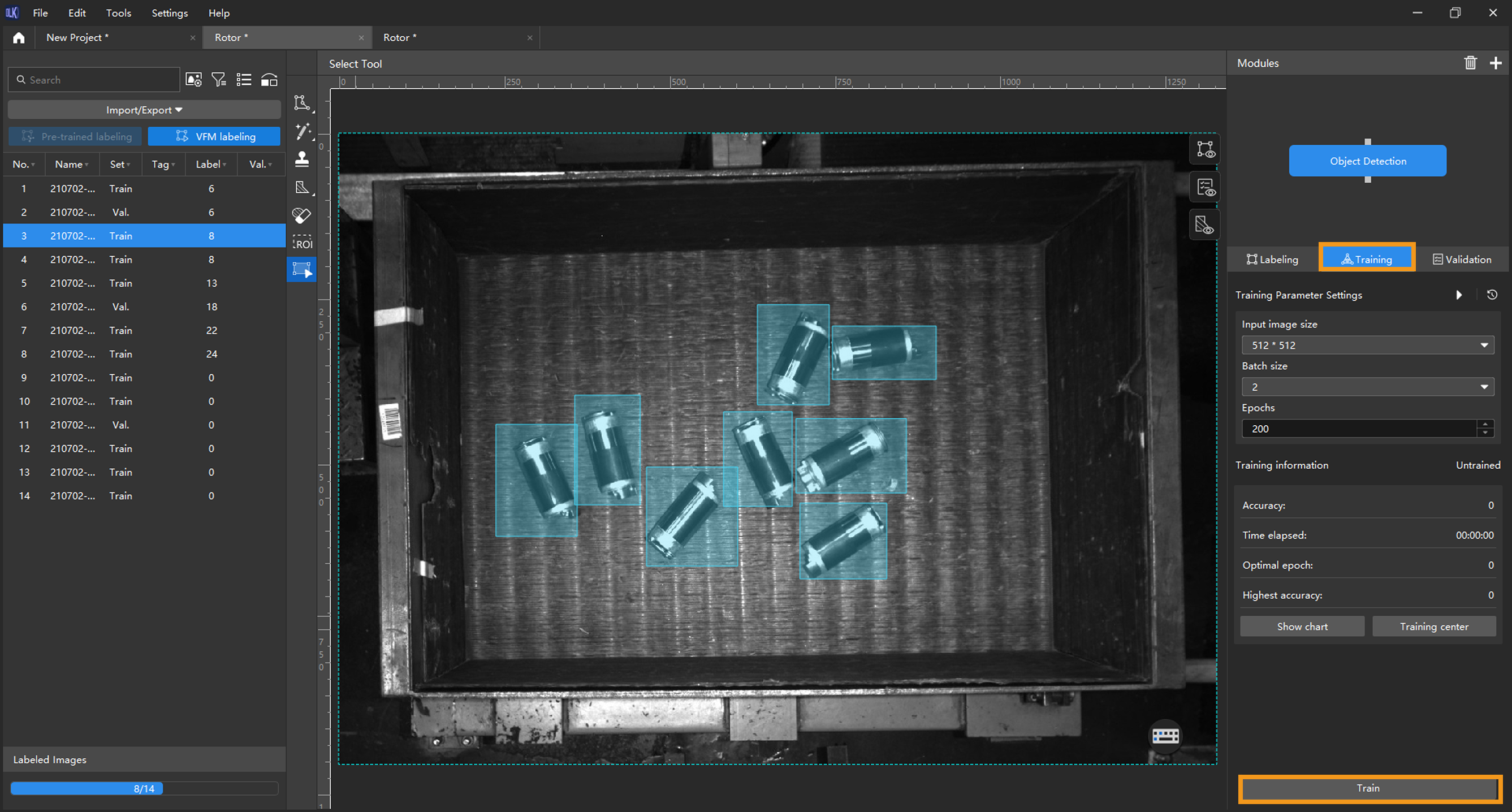

Train the model: Keep the default training parameter settings and click Train to start training the model.

-

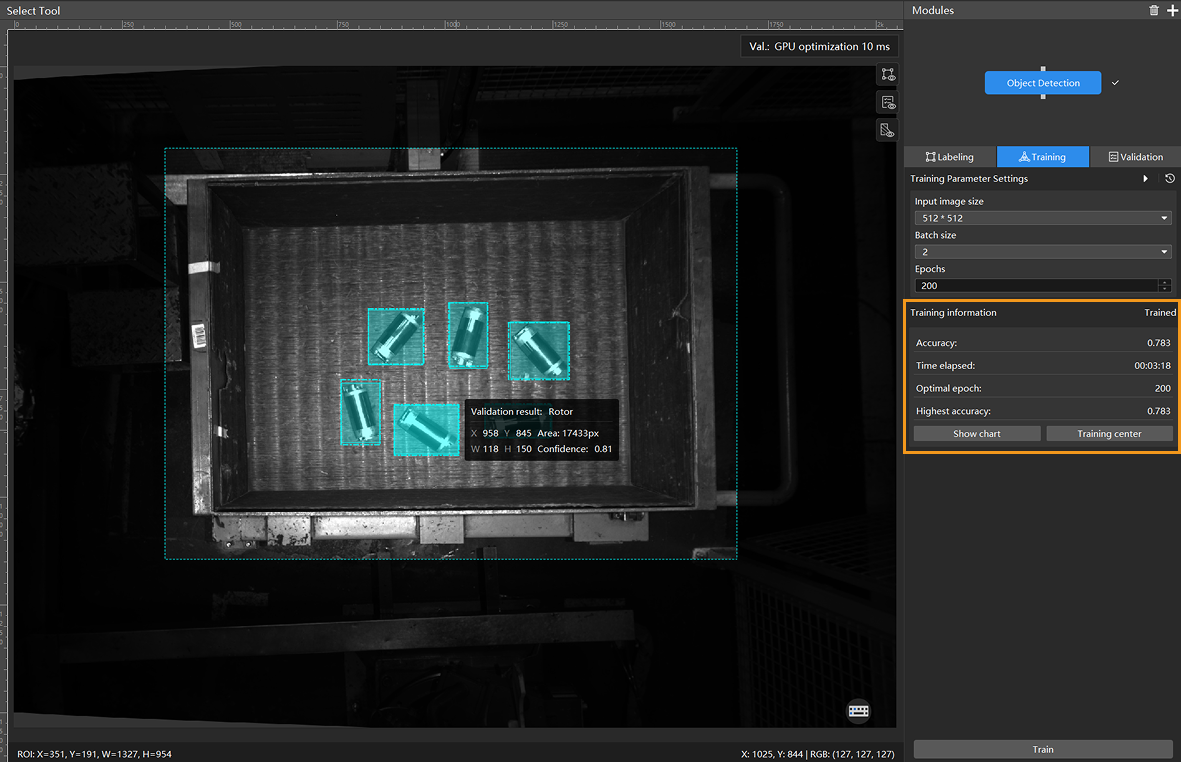

Monitor training progress through training information: On the Training tab, the training information panel allows you to view real-time model training details.

-

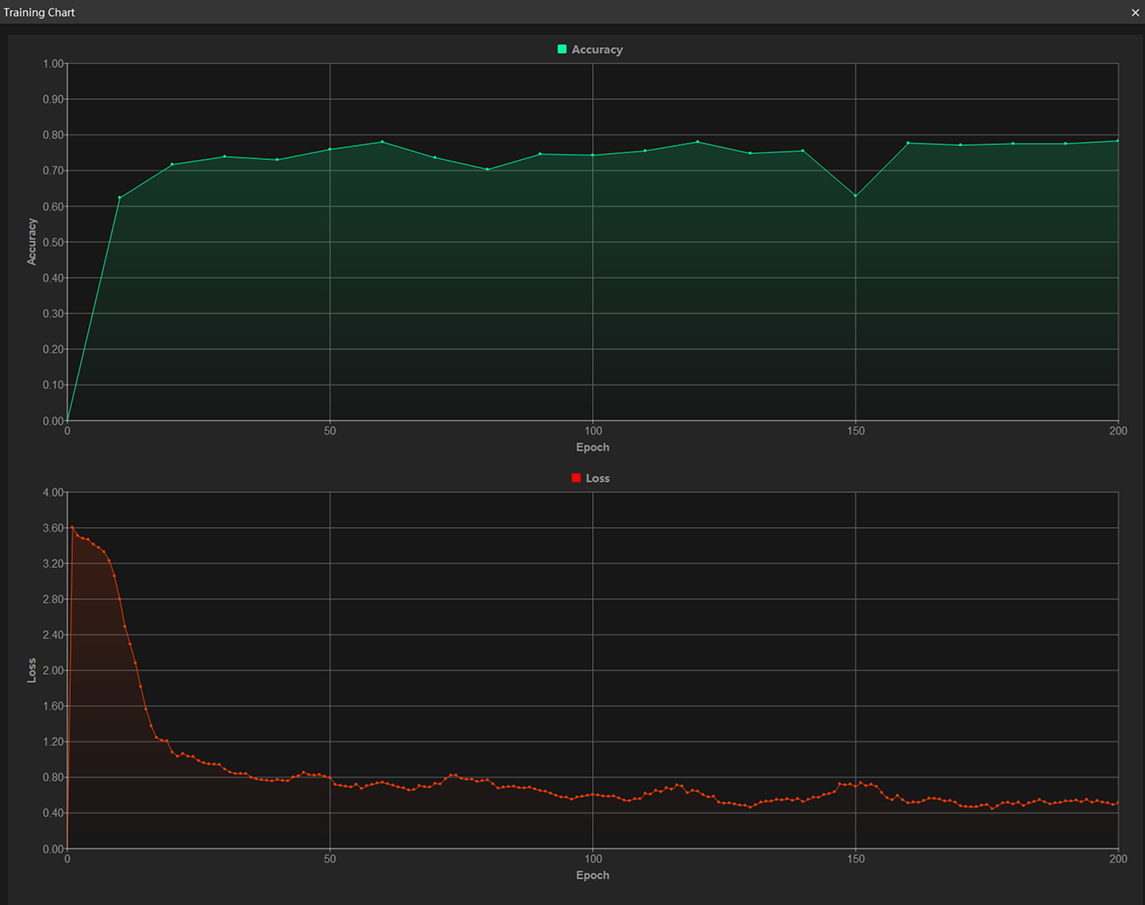

View training progress through the Training Chart window: Click the Show chart button under the Training tab to view real-time changes in the model’s accuracy and loss curves during training. An overall upward trend in the accuracy curve and a downward trend in the loss curve indicate that the current training is running properly.
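The "overall trend" rule of thumb can be expressed as a simple check on the curve values. The sketch below compares the mean of the first half of a curve with the mean of the second half. The function names and the sample values are hypothetical, and the software does not necessarily expose the curves in this form.

```python
def overall_trend(values):
    """Return mean(second half) - mean(first half) of a curve.

    A positive result suggests an overall upward trend, a negative
    result an overall downward trend.
    """
    half = len(values) // 2
    if half == 0:
        raise ValueError("need at least two values")
    first = sum(values[:half]) / half
    second = sum(values[-half:]) / half
    return second - first

def training_looks_normal(accuracy, loss):
    """True if accuracy trends upward and loss trends downward overall."""
    return overall_trend(accuracy) > 0 and overall_trend(loss) < 0

# Hypothetical curve samples, one value per epoch.
accuracy = [0.20, 0.50, 0.70, 0.90]
loss = [1.50, 0.90, 0.50, 0.30]
print(training_looks_normal(accuracy, loss))  # True
```

Comparing half-means tolerates small fluctuations between neighboring points, which matches the idea of an overall trend rather than a strictly monotonic curve.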

-

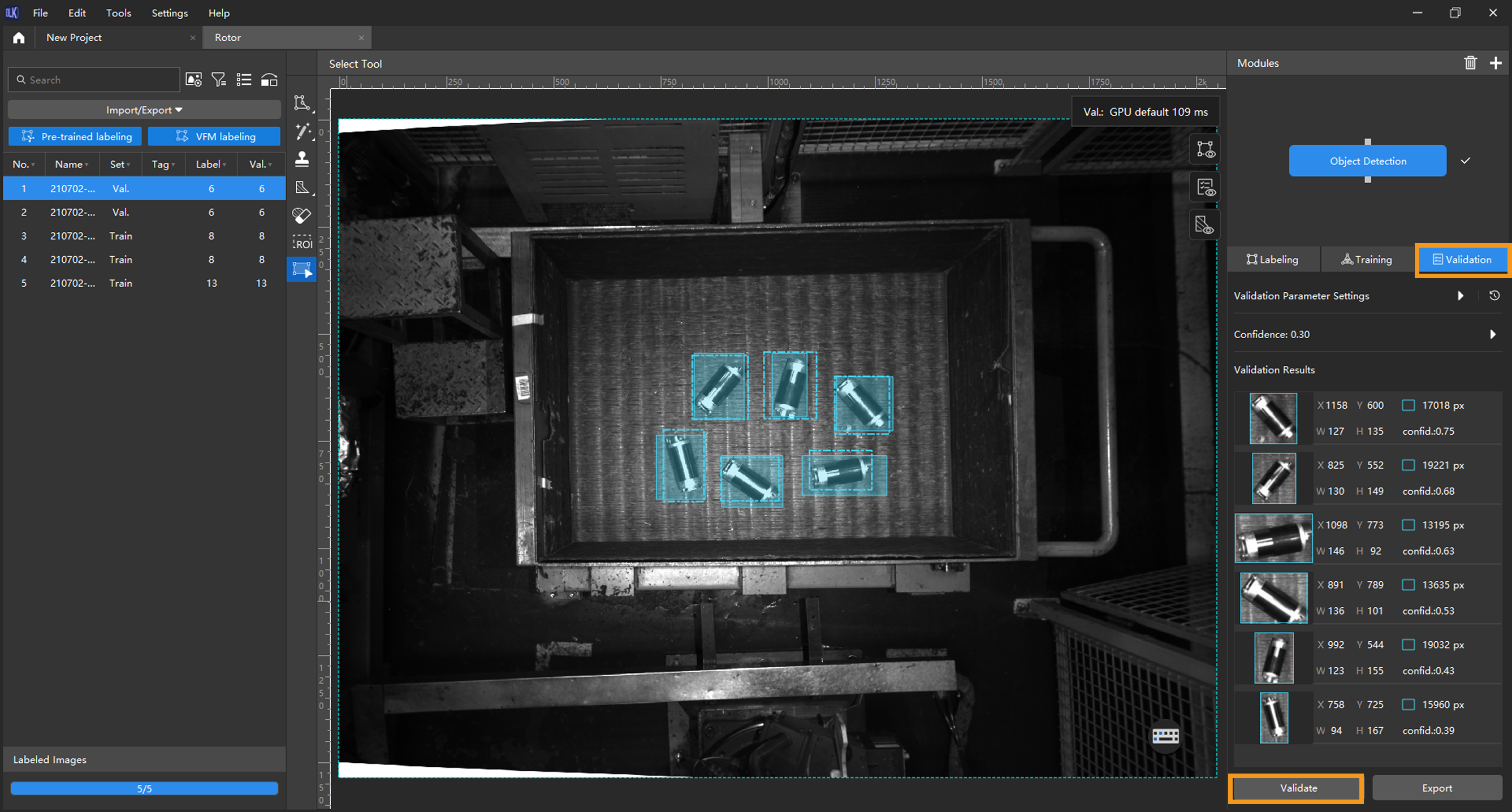

Validate the model: After the training is completed, click Validate to validate the model and check the results.

After you validate a model, you can import new image data to the current module and use the pre-trained labeling feature to perform auto-labeling based on this model. For more information, see Pre-trained labeling.

-

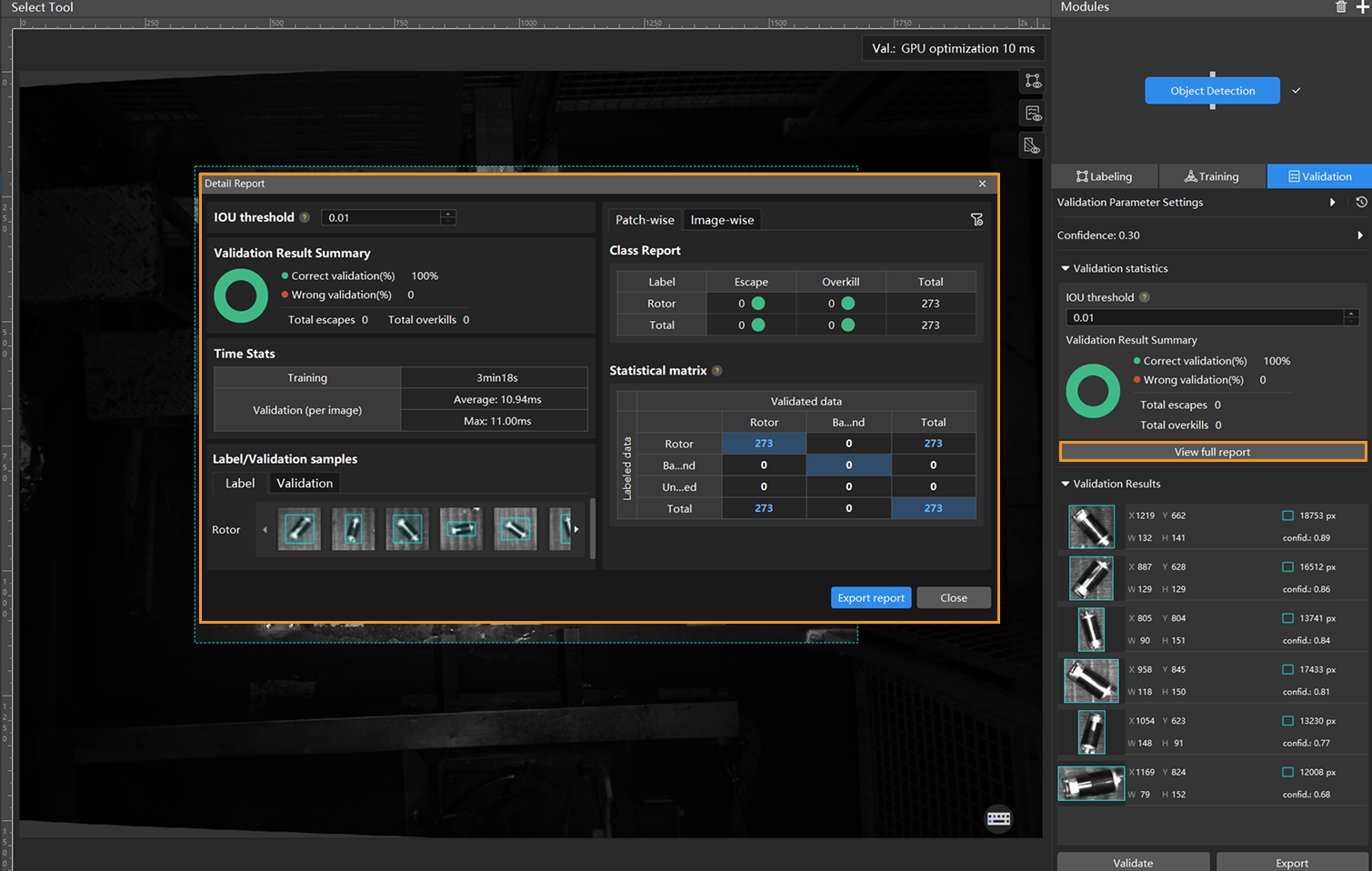

Check the model’s validation results in the training set: After validation is complete, you can view the number of validation results of each type in the Validation statistics section under the Validation tab.

-

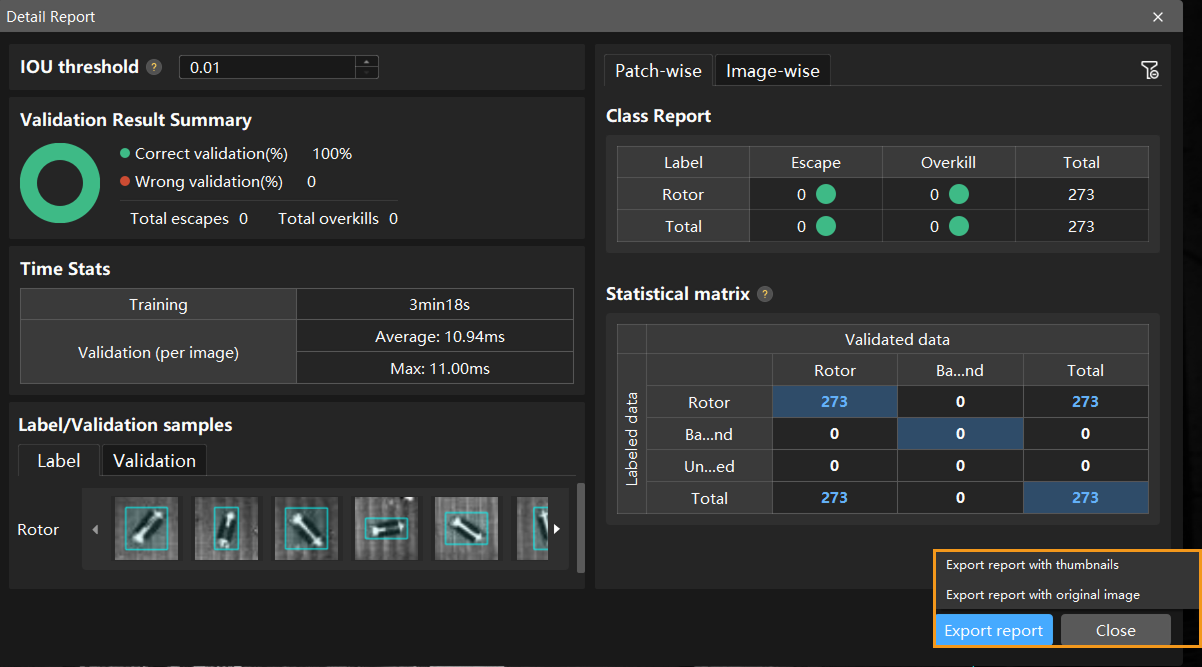

Click the View full report button to open the Detailed Report window and view detailed validation statistics.

-

The Statistical matrix in the report shows the correspondence between the validated data and labeled data of the model, allowing you to assess how well each class is matched by the model.

-

In the matrix, the vertical axis represents labeled data, and the horizontal axis represents predicted results. Blue cells indicate matches between predictions and labels, while the other cells represent mismatches, which can provide insights for model optimization.

-

Clicking a value in the matrix will automatically filter the image list in the main interface to display only the images corresponding to the selected value.
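The matrix described above can be reproduced with a few lines of code. The sketch assumes that each predicted object has already been paired with a labeled object (real object detection needs a matching step, for example by box overlap, which is omitted). The class and image names are hypothetical.

```python
def statistical_matrix(records, classes):
    """Build a matrix with labeled classes as rows and predictions as columns.

    `records` is a list of (image_name, labeled_class, predicted_class).
    """
    index = {name: i for i, name in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for _, labeled, predicted in records:
        matrix[index[labeled]][index[predicted]] += 1
    return matrix

def images_for_cell(records, labeled, predicted):
    """List the images that contribute to one cell of the matrix."""
    return sorted({img for img, lab, pred in records
                   if lab == labeled and pred == predicted})

# Hypothetical validation records for two classes.
classes = ["rotor_a", "rotor_b"]
records = [
    ("img_1.png", "rotor_a", "rotor_a"),
    ("img_1.png", "rotor_a", "rotor_b"),  # mismatch
    ("img_2.png", "rotor_b", "rotor_b"),
    ("img_3.png", "rotor_b", "rotor_b"),
]

print(statistical_matrix(records, classes))             # [[1, 1], [0, 2]]
print(images_for_cell(records, "rotor_a", "rotor_b"))   # ['img_1.png']
```

Values on the diagonal are matches, and `images_for_cell` mirrors the filtering that happens when you click a value in the matrix.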

If the validation results on the training set show missed or incorrect detections, the model has not been trained well enough. Check the labels, adjust the training parameter settings, and restart the training. You can also click the Export report button in the lower right corner of the Detailed Report window to export either a thumbnail report or a full-image report.

You don’t need to label and move all images with missed or incorrect detections in the test set into the training set. You can label a portion of the images, add them to the training set, then retrain and validate the model. Use the remaining images as a reference to observe the validation results and evaluate the effectiveness of the model iteration.

-

Restart training: After adding newly labeled images to the training set, click the Train button to restart training.

-

Recheck model validation results: After training is complete, click the Validate button again to validate the model and review the validation results on each dataset.

-

Fine-tune the model (optional): You can enable developer mode and turn on Finetune in the Training Parameter Settings dialog box. For more information, see Iterate a Model.

-

Continuously optimize the model: Repeat the above steps to continuously improve model performance until it meets the requirements.

-

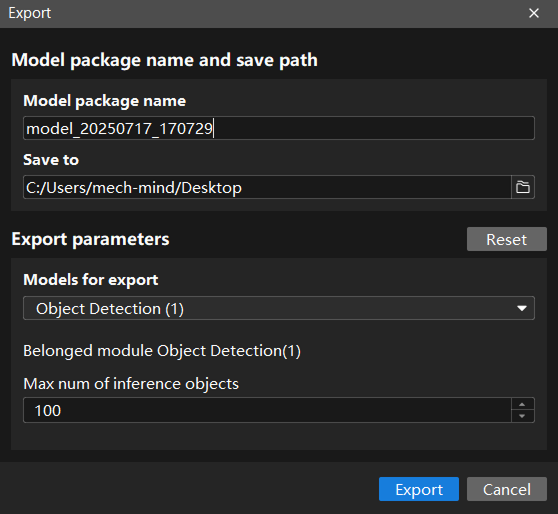

Export the model: Click Export. Then, set Max num of inference objects in the pop-up dialog box, select a directory to save the exported model, and click Export.

Max num of inference objects: the maximum number of objects that can be detected during a round of inference, which is 100 by default.
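The effect of such a cap on the reported rotor quantity can be illustrated as follows. This document does not state which objects are kept when the cap is exceeded, so keeping the highest-confidence detections is an assumption made for this sketch. The function name and the detection format are hypothetical as well.

```python
def cap_detections(detections, max_objects=100):
    """Keep at most `max_objects` detections.

    ASSUMPTION: detections are ranked by confidence and the highest ones
    are kept. The exported model may behave differently.
    """
    if max_objects < 0:
        raise ValueError("max_objects must not be negative")
    ranked = sorted(detections, key=lambda d: d["confidence"], reverse=True)
    return ranked[:max_objects]

# Hypothetical detections from one round of inference.
detections = [
    {"label": "rotor", "confidence": 0.80},
    {"label": "rotor", "confidence": 0.90},
    {"label": "rotor", "confidence": 0.60},
]

kept = cap_detections(detections, max_objects=2)
print(len(kept))                        # 2
print([d["confidence"] for d in kept])  # [0.9, 0.8]
```

If a bin can hold more rotors than the cap allows, the reported quantity cannot exceed the cap, so set the value above the largest number of objects you expect in one image.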

The exported model can be used in Mech-Vision, Mech-DLK SDK and Mech-MSR. Click here to view the details.