Solution Deployment

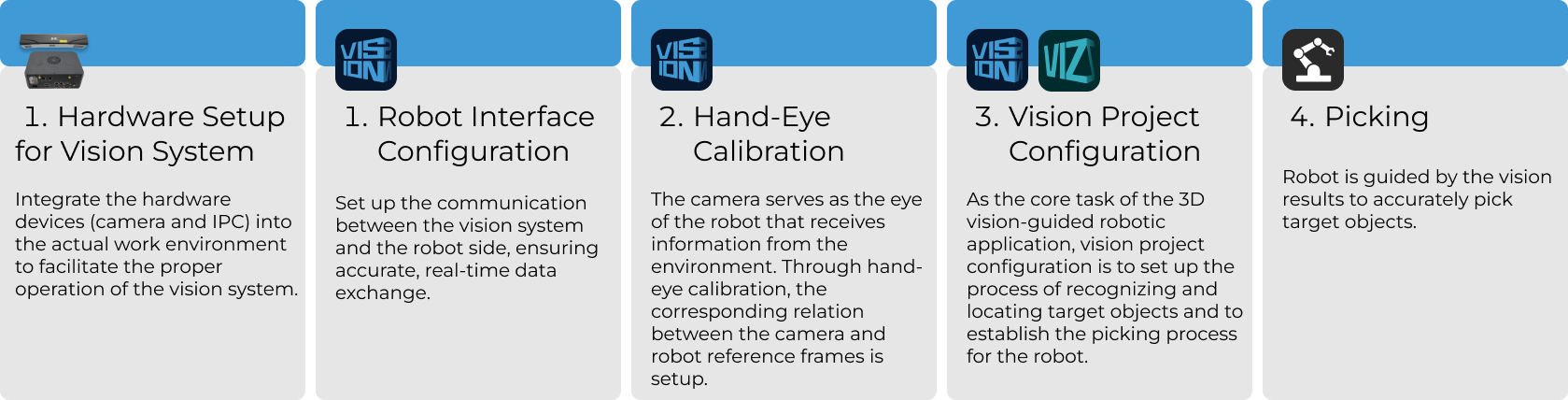

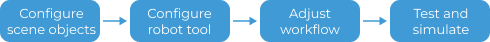

This section introduces the deployment of the Small Metal Parts in Deep Bin solution. The overall process is shown in the figure below.

Vision System Hardware Setup

Vision system hardware setup refers to integrating the hardware (camera and industrial PC) into the actual environment to support the normal operation of the vision system.

In this phase, you need to install and set up the hardware of the vision system. For details, refer to Vision System Hardware Setup.

Robot Communication Configuration

Before configuring robot communication, obtain the solution as follows:

- Open Mech-Vision.
- In the Welcome interface of Mech-Vision, click Create from Solution Library to open the Solution Library.
- Enter the Typical cases category in the Solution Library, click the icon in the upper right corner to get more resources, and then click the Confirm button in the pop-up window.
- After acquiring the solution resources, select the Small Metal Parts in Deep Bin solution under the Randomly-stacked part picking category, fill in the Solution name and Path at the bottom, and click the Create button. Then click the Confirm button in the pop-up window to download the Small Metal Parts in Deep Bin solution.

Once the solution is downloaded, it will be automatically opened in Mech-Vision.

Before deploying a vision solution, you need to set up the communication between the Mech-Mind Vision System and the robot side (robot, PLC, or host computer).

The Small Metal Parts in Deep Bin solution uses Standard Interface communication. For detailed instructions, please refer to Standard Interface Communication Configuration.

Hand-Eye Calibration

Hand-eye calibration establishes the transformation relationship between the camera and robot reference frames. With this relationship, the object pose determined by the vision system can be transformed into that in the robot reference frame, which guides the robot to perform its tasks.

Please refer to Robot Hand-Eye Calibration Guide and complete the hand-eye calibration.
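The transformation described above can be illustrated with 4×4 homogeneous matrices. The sketch below is a minimal pure-Python example, not Mech-Vision's implementation. It assumes a fixed-mount (eye-to-hand) camera, so a single calibrated transform `T_BASE_CAM` maps camera-frame poses into the robot base frame; the matrix values and helper names are hypothetical. For an eye-in-hand setup, the flange pose at capture time would also have to be chained in.

```python
Matrix = list[list[float]]  # 4x4 homogeneous transform, row-major


def mat_mul(a: Matrix, b: Matrix) -> Matrix:
    """Return the product a @ b of two 4x4 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def camera_to_robot(t_base_cam: Matrix, t_cam_obj: Matrix) -> Matrix:
    """Express an object pose measured in the camera frame in the robot base frame."""
    return mat_mul(t_base_cam, t_cam_obj)


# Hypothetical calibration result: camera 1000 mm above the base origin, offset
# 500 mm along X, looking straight down (rotated 180 degrees about X).
T_BASE_CAM: Matrix = [
    [1.0, 0.0, 0.0, 500.0],
    [0.0, -1.0, 0.0, 0.0],
    [0.0, 0.0, -1.0, 1000.0],
    [0.0, 0.0, 0.0, 1.0],
]

# Hypothetical object pose: 800 mm in front of the camera, same orientation as the camera.
T_CAM_OBJ: Matrix = [
    [1.0, 0.0, 0.0, 10.0],
    [0.0, 1.0, 0.0, 20.0],
    [0.0, 0.0, 1.0, 800.0],
    [0.0, 0.0, 0.0, 1.0],
]

t_base_obj = camera_to_robot(T_BASE_CAM, T_CAM_OBJ)
position = [row[3] for row in t_base_obj[:3]]  # [510.0, -20.0, 200.0]
```

Because the calibrated transform is fixed in this setup, every pose output by the vision system can be converted with the same single matrix product.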

Whenever the camera is remounted, or the relative position of the camera and the robot changes after calibration, perform hand-eye calibration again.

Vision Project Configuration

After completing the communication configuration and hand-eye calibration, you can use Mech-Vision to configure the vision project.

This solution consists of two projects: Small Metal Parts in Deep Bin and Small Metal Parts on Repositioning Station.

The Small Metal Parts in Deep Bin project is used to recognize and output poses of small metal parts in deep bins.

The Small Metal Parts on Repositioning Station project is used to recognize and output the poses of small metal parts on the repositioning station.

The following sections introduce the two projects respectively.

Small Metal Parts in Deep Bin

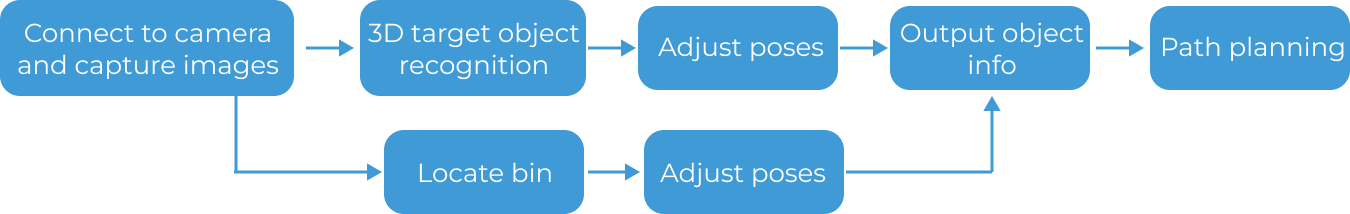

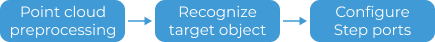

The process of how to configure this vision project is shown in the figure below.

Connect to the Camera and Capture Images

- Connect to the camera.

Open Mech-Eye Viewer, find the camera to be connected, and click the Connect button.

- Adjust camera parameters.

To ensure that the captured 2D image is clear and the point cloud is intact, adjust the camera parameters. For detailed instructions, please refer to PRO M Camera Parameter Reference.

-

Capture images.

After the camera is successfully connected and the parameter group is set, you can start capturing the target object images. Click the

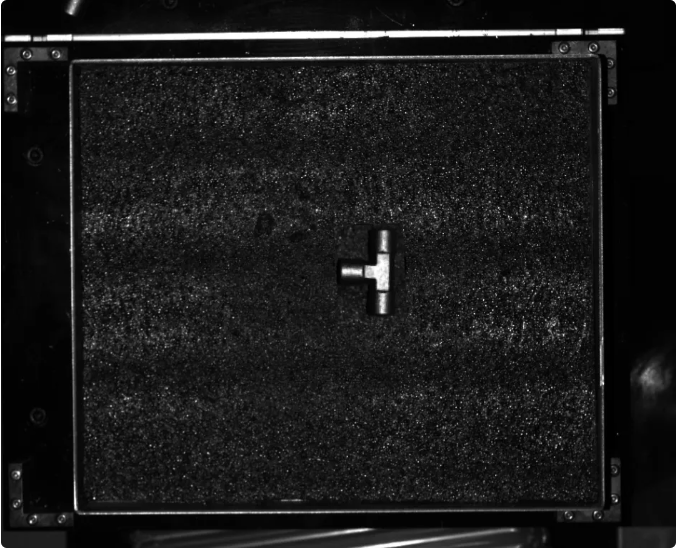

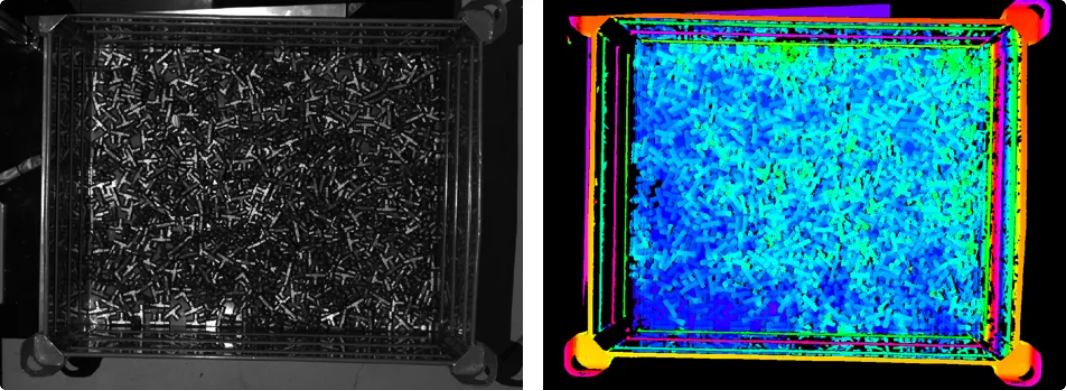

button on the top to capture a single image. At this time, you can view the captured 2D image and point cloud of the target object. Ensure that the 2D image is clear, the point cloud is intact, and the edges are clear. The qualified 2D image and point cloud of the target object are shown on the left and right in the figure below respectively.

button on the top to capture a single image. At this time, you can view the captured 2D image and point cloud of the target object. Ensure that the 2D image is clear, the point cloud is intact, and the edges are clear. The qualified 2D image and point cloud of the target object are shown on the left and right in the figure below respectively.

- Connect to the camera in Mech-Vision.

Select the Capture Images from Camera Step, disable the Virtual Mode option, and click the Select camera button. In the pop-up window, click the connect icon on the right of the camera serial number. When the icon changes to the connected state, the camera is connected successfully. You can then select the camera calibration parameter group in the drop-down list on the right.

Now that you have connected to the real camera, you do not need to adjust other parameters. Click the run icon on the Capture Images from Camera Step to run the Step. If no error occurs, the camera is connected successfully and images can be captured properly.

3D Target Object Recognition (to Recognize Target Object)

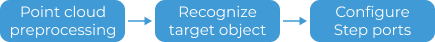

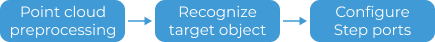

The Small Metal Parts in Deep Bin solution uses the 3D Target Object Recognition Step to recognize target objects. Click the Config wizard button in the Step Parameters panel of the 3D Target Object Recognition Step to open the 3D Target Object Recognition tool and configure the relevant settings. The overall configuration process is shown in the figure below.

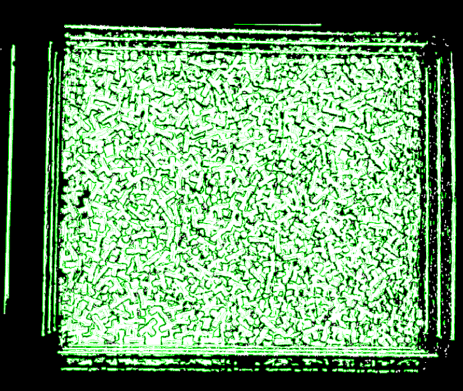

Point Cloud Preprocessing

In point cloud preprocessing, adjust the parameters to make the original point cloud clearer, thus improving recognition accuracy and efficiency.

- Set an effective recognition area to block out interference factors and improve recognition efficiency.
- Set the Edge extraction effect, Noise removal level, and Point filter parameters to remove noise.

After point cloud preprocessing, click the Run Step button.
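Setting an effective recognition area amounts to discarding every point that lies outside a 3D region. The sketch below models that region as an axis-aligned box in millimeters; this is an assumption for illustration (the tool's own ROI may be oriented differently), and `RecognitionArea` and `crop_point_cloud` are hypothetical names, not Mech-Vision APIs.

```python
from dataclasses import dataclass

Point = tuple[float, float, float]  # x, y, z in mm


@dataclass(frozen=True)
class RecognitionArea:
    """Hypothetical axis-aligned 3D box standing in for the effective recognition area."""

    min_xyz: Point
    max_xyz: Point

    def contains(self, p: Point) -> bool:
        """True if p lies inside the box (bounds inclusive)."""
        return all(lo <= v <= hi for v, lo, hi in zip(p, self.min_xyz, self.max_xyz))


def crop_point_cloud(points: list[Point], area: RecognitionArea) -> list[Point]:
    """Keep only the points inside the recognition area."""
    return [p for p in points if area.contains(p)]


area = RecognitionArea(min_xyz=(-200.0, -150.0, 600.0), max_xyz=(200.0, 150.0, 1100.0))
cloud = [
    (0.0, 0.0, 900.0),      # inside the area: kept
    (250.0, 0.0, 900.0),    # outside the X range (e.g. a bin wall): removed
    (10.0, -20.0, 1150.0),  # beyond the Z range (e.g. the floor): removed
]
cropped = crop_point_cloud(cloud, area)  # [(0.0, 0.0, 900.0)]
```

Fewer points reach the matching stage after cropping, which is why the recognition area improves efficiency as well as robustness.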

Recognize Target Object

After point cloud preprocessing, you need to create a point cloud model for the target object in the Target Object Editor, and then set matching parameters in the 3D Target Object Recognition tool for point cloud model matching.

- Create a target object model.

Create a point cloud model and add the pick point: click the Open target object editor button to open the editor, and import the STL file to generate a point cloud model of the target object.

- Set parameters related to object recognition.

  - Enable Advanced mode on the right side of Recognize target object.
  - Matching mode: Enable Auto-set matching mode. Once it is enabled, the Step automatically adjusts the parameters under Coarse matching settings and Fine matching settings.
  - Coarse matching settings: To reduce matching time, set the Performance mode to Custom and set the Expected point count of model to 200 to improve recognition speed.
  - Confidence settings: To improve recognition accuracy, set the Confidence strategy to Manual and adjust the following parameters:
    - Set the Joint scoring strategy to Consider both surface and edge.
    - Set Surface matching confidence threshold to 0.5000 and Edge matching confidence threshold to 0.1000.
    - In the recognition result section at the bottom of the left-side visualization window, select Output result from the first drop-down menu. Only target objects whose Surface matching confidence and Edge matching confidence both exceed the set thresholds are retained. Check the recognition result against the actual scene: if false recognitions occur, raise the corresponding threshold; if target objects are missed (false negatives), lower it.
    - When adjusting the Confidence strategy parameter, first check the recognition result with it set to Auto. If the result still does not meet on-site requirements after adjusting the Confidence threshold, and the Surface matching confidence and Edge matching confidence values shown at the bottom of the visualization window differ significantly, set the Confidence strategy to Manual and adjust the relevant parameters.
  - Output > Max outputs: Minimize the number of outputs to reduce matching time while still meeting path planning requirements. In this solution, Max outputs is set to 30.
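The retention rule above (both confidences must exceed their thresholds) and the Max outputs cap can be sketched as a simple filter. The thresholds 0.5 and 0.1 and the cap of 30 come from this solution; the `Match` structure is hypothetical, and ranking by surface confidence before truncating is an assumption, since the tool's own output order is not described here.

```python
from dataclasses import dataclass


@dataclass
class Match:
    """One recognized instance with its two confidence scores (hypothetical structure)."""

    name: str
    surface_confidence: float
    edge_confidence: float


def filter_matches(
    matches: list[Match],
    surface_threshold: float = 0.5,
    edge_threshold: float = 0.1,
    max_outputs: int = 30,
) -> list[Match]:
    """Keep matches exceeding BOTH thresholds, then cap the result at max_outputs."""
    kept = [
        m
        for m in matches
        if m.surface_confidence > surface_threshold and m.edge_confidence > edge_threshold
    ]
    # Assumption: rank by surface confidence before truncating.
    kept.sort(key=lambda m: m.surface_confidence, reverse=True)
    return kept[:max_outputs]


matches = [
    Match("a", 0.92, 0.35),
    Match("b", 0.61, 0.05),  # edge confidence too low: dropped
    Match("c", 0.42, 0.50),  # surface confidence too low: dropped
    Match("d", 0.77, 0.20),
]
print([m.name for m in filter_matches(matches)])  # ['a', 'd']
```

Raising either threshold shrinks the kept set (fewer false recognitions); lowering it does the opposite (fewer false negatives), which mirrors the tuning advice above.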

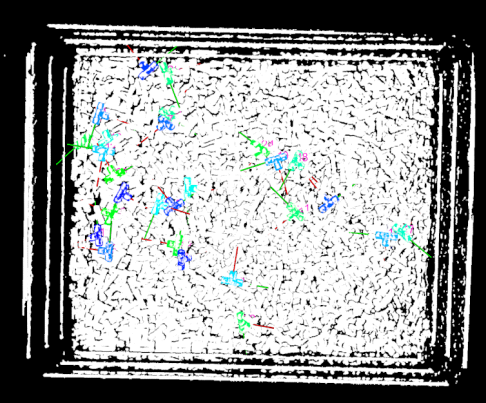

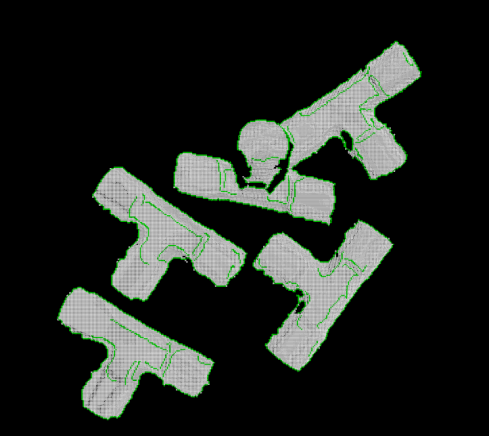

After setting the above parameters, click the Run Step button. The matching result is shown in the figure below.

Configure Step Ports

After target object recognition, Step ports should be configured to provide vision results and point clouds for Mech-Viz for path planning and collision detection.

To ensure that objects can be successfully picked by the robot, you need to adjust the center point of the target object so that its Z-axis points upwards. Under Select port, select Port(s) related to object center point. To recognize and locate the bin, select the Preprocessed point cloud option. Finally, click the Save button. New output ports are added to the 3D Target Object Recognition Step.

3D Target Object Recognition (to Recognize Bin)

This solution uses the 3D Target Object Recognition Step to recognize the bin. Click the Config wizard button in the Step Parameters panel of the 3D Target Object Recognition Step to open the 3D Target Object Recognition tool and configure the relevant settings. The overall configuration process is shown in the figure below.

Point Cloud Preprocessing

In point cloud preprocessing, adjust the parameters to make the original point cloud clearer, thus improving recognition accuracy and efficiency.

- Set an effective recognition area to block out interference factors and improve recognition efficiency.
- Set the Edge extraction effect, Noise removal level, and Point filter parameters to remove noise.

After point cloud preprocessing, click the Run Step button.

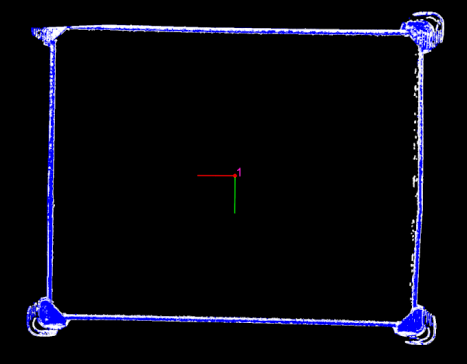

Recognize Target Object

After point cloud preprocessing, you need to create a point cloud model for the bin in the Target Object Editor, and then set matching parameters in the 3D Target Object Recognition tool for point cloud model matching.

- Create a target object model.

Create a point cloud model and add the pick point: click the Open target object editor button to open the editor, and generate a point cloud model based on the point cloud acquired by the camera.

- Set parameters related to object recognition.

  - Matching mode: Enable Auto-set matching mode.
  - Confidence settings: Set the Confidence threshold to 0.8 to remove incorrect matching results.
  - Output > Max outputs: Since the target object is a bin, set Max outputs to 1.

After setting the above parameters, click the Run Step button. The matching result is shown in the figure below.

Configure Step Ports

After target object recognition, Step ports should be configured to provide vision results and point clouds for Mech-Viz for path planning and collision detection.

To obtain the position information of the real bin, select the Port(s) related to object center point option under Select port, and click the Save button. New output ports are added to the 3D Target Object Recognition Step.

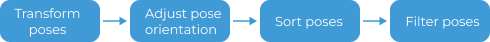

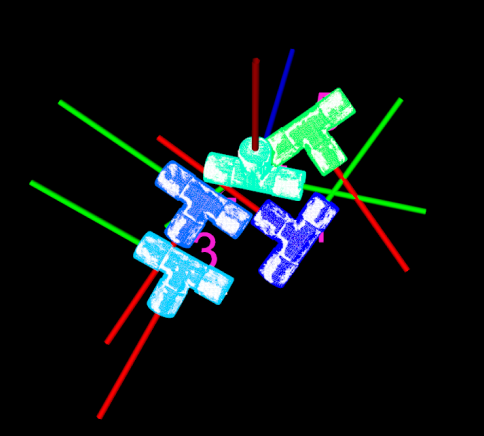

Adjust Poses (Target Object Poses)

After obtaining the target object poses, you need to use the Adjust Poses V2 Step to adjust the poses. Click the Config wizard button in the Step Parameters panel of the Adjust Poses V2 Step to open the pose adjustment tool for pose adjustment configuration. The overall configuration process is shown in the figure below.

- To output the target object poses in the robot reference frame, select the Transform pose to robot reference frame checkbox to transform the poses from the camera reference frame to the robot reference frame.
- Adjust the target object poses so that the Z-axis points upward and the Y-axis points in the negative X-direction of the robot reference frame. This lets the robot pick target objects in the specified direction and avoid collisions.
  - Enable Custom mode for Pose adjustment.
  - Set the parameters of the Flip pose and minimize the angle between the flip axis and target direction option: set Axis to be fixed to X-axis, Axis to be flipped to Z-axis, and the target direction to Positive Z-direction in the robot reference frame.
  - Set the parameters of the Rotate pose and minimize the angle between the rotation axis and target direction option: set Axis to be fixed to Z-axis, Axis to be rotated to Y-axis, and the target direction to Negative X-direction in the robot reference frame.
- Set the Sorting type to Sort by X/Y/Z value of pose, set Specified value of the pose to Z-coordinate, and sort the poses in Descending order.
- To reduce the time required for subsequent path planning, filter out target objects that cannot be easily picked based on the angle between the Z-axis of the pose and the reference direction. In this solution, set the Max angle difference to 90°.
- Set an ROI to filter out poses outside the ROI.
- General settings.

Set number of new ports to 1, which adds a new input port and a new output port to the Step. Connect the input port to the Target Object Names output port of the 3D Target Object Recognition Step, and connect the output port to the Output Step.
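The flip and rotate options can be approximated with rotation matrices whose columns are the pose's X, Y, and Z axes expressed in the robot frame. The pure-Python sketch below is our own approximation, not Mech-Vision's code: the flip keeps X fixed and, if Z points downward, turns the pose 180° about its own X-axis (reversing Y and Z); the rotation keeps Z fixed and turns the pose continuously about it until Y is as close as possible to the robot -X direction. Continuous rotation is an assumption, and the function names are hypothetical.

```python
import math

Rot = list[list[float]]  # 3x3; columns are the pose's X, Y, Z axes in the robot frame


def axis(r: Rot, j: int) -> tuple[float, float, float]:
    """Return column j (0 = X, 1 = Y, 2 = Z) of the rotation matrix."""
    return (r[0][j], r[1][j], r[2][j])


def flip_z_up(r: Rot) -> Rot:
    """Keep X fixed; if Z points downward, flip it (180 degrees about the pose X-axis)."""
    if r[2][2] < 0.0:  # angle between pose Z and robot +Z exceeds 90 degrees
        return [[row[0], -row[1], -row[2]] for row in r]  # negate the Y and Z columns
    return [row[:] for row in r]


def rotate_y_toward(r: Rot, target: tuple[float, float, float]) -> Rot:
    """Keep Z fixed; rotate about it so the Y-axis is as close as possible to target."""
    # Components of the target direction along the pose's own X and Y axes.
    tx = sum(r[i][0] * target[i] for i in range(3))
    ty = sum(r[i][1] * target[i] for i in range(3))
    # Rotation about local Z that maps local Y onto the direction of (tx, ty).
    theta = math.atan2(-tx, ty)
    c, s = math.cos(theta), math.sin(theta)
    rz = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    return [[sum(r[i][k] * rz[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


# A pose recognized upside down: X = robot +X, Y = robot -Y, Z = robot -Z.
pose = [[1.0, 0.0, 0.0], [0.0, -1.0, 0.0], [0.0, 0.0, -1.0]]
adjusted = rotate_y_toward(flip_z_up(pose), (-1.0, 0.0, 0.0))  # target: robot -X
# axis(adjusted, 2) is now approximately (0, 0, 1); axis(adjusted, 1) approximately (-1, 0, 0)
```

Flipping first and rotating second matches the order of the options above: the flip fixes the Z-axis direction, and the rotation about that Z-axis then only changes the in-plane heading.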

Adjust Poses (Bin Poses)

After obtaining the bin pose, you need to use the Adjust Poses V2 Step to adjust the pose. Click the Config wizard button in the Step Parameters panel of the Adjust Poses V2 Step to open the pose adjustment tool for pose adjustment configuration. The overall configuration process is shown in the figure below.

- Select pose processing strategy.

Since the object is a deep bin holding the target objects, select the Bin option.

- To output the bin pose in the robot reference frame, select the Transform pose to robot reference frame checkbox to transform the pose from the camera reference frame to the robot reference frame.
- Translate poses along specified direction.

In the robot reference frame, move the bin pose along the Positive Z-direction and manually set the Translation distance to -245 mm. This moves the bin pose from the top surface of the bin down to the bin center; the adjusted pose is later used to update the bin collision model in Mech-Viz.

Translation distance = -1 × 1/2 × Bin height

- To reduce the time required for subsequent path planning, filter out target objects that cannot be easily picked based on the angle between the Z-axis of the pose and the reference direction. In this solution, set the Max angle difference to 90°.
- General settings.

Set number of new ports to 1, which adds a new input port and a new output port to the Step. Connect the input port to the Target Object Names output port of the 3D Target Object Recognition Step, and connect the output port to the Output Step.
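The translation formula above can be checked with a short calculation. The -245 mm used in this solution corresponds to a bin height of 490 mm (490 / 2 = 245). The sketch assumes the robot reference frame's Z-axis is vertical, so only the Z-coordinate of the bin pose changes; the function names and the example coordinates are hypothetical.

```python
def bin_translation_distance(bin_height_mm: float) -> float:
    """Translation distance along robot +Z: -1 x 1/2 x bin height."""
    return -0.5 * bin_height_mm


def bin_top_to_center(
    top_xyz: tuple[float, float, float], bin_height_mm: float
) -> tuple[float, float, float]:
    """Move a bin pose recognized on the top surface down to the bin's center."""
    x, y, z = top_xyz
    return (x, y, z + bin_translation_distance(bin_height_mm))


print(bin_translation_distance(490.0))  # -245.0
```

For a different bin, measure its height and recompute the distance rather than reusing -245 mm.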

Small Metal Parts on Repositioning Station

The process of how to configure this vision project is shown in the figure below.

Connect to the Camera and Capture Images

- Connect to the camera.

Open Mech-Eye Viewer, find the camera to be connected, and click the Connect button.

- Adjust camera parameters.

To ensure that the captured 2D image is clear and the point cloud is intact, adjust the camera parameters. For detailed instructions, please refer to PRO S Camera Parameter Reference.

-

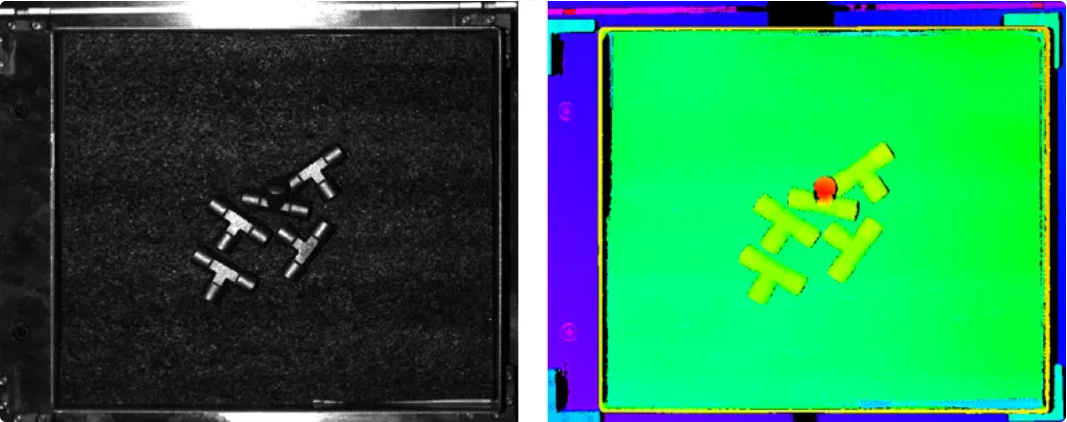

Capture images.

After the camera is successfully connected and the parameter group is set, you can start capturing the target object images. Click the

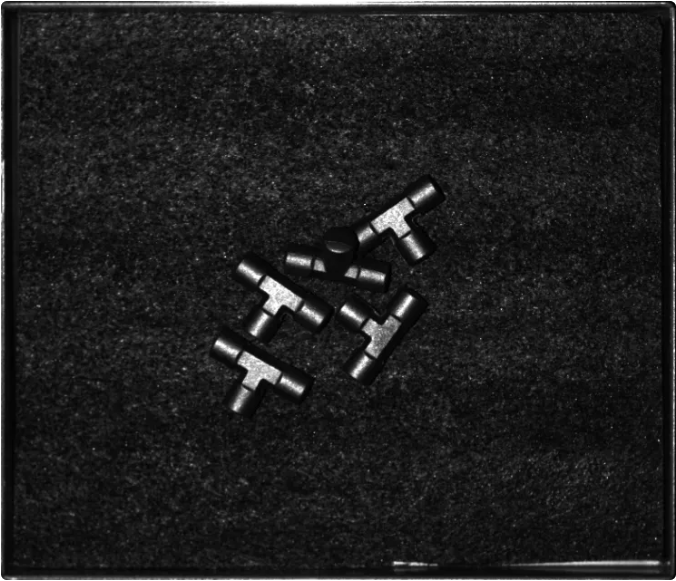

button on the top to capture a single image. At this time, you can view the captured 2D image and point cloud of the target object. Ensure that the 2D image is clear, the point cloud is intact, and the edges are clear. The qualified 2D image and point cloud of the target object are shown on the left and right in the figure below respectively.

button on the top to capture a single image. At this time, you can view the captured 2D image and point cloud of the target object. Ensure that the 2D image is clear, the point cloud is intact, and the edges are clear. The qualified 2D image and point cloud of the target object are shown on the left and right in the figure below respectively.

- Connect to the camera in Mech-Vision.

Select the Capture Images from Camera Step, disable the Virtual Mode option, and click the Select camera button. In the pop-up window, click the connect icon on the right of the camera serial number. When the icon changes to the connected state, the camera is connected successfully. You can then select the camera calibration parameter group in the drop-down list on the right.

Now that you have connected to the real camera, you do not need to adjust other parameters. Click the run icon on the Capture Images from Camera Step to run the Step. If no error occurs, the camera is connected successfully and images can be captured properly.

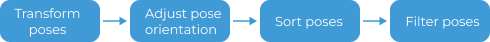

3D Target Object Recognition (to Recognize Target Object)

The Small Metal Parts in Deep Bin solution uses the 3D Target Object Recognition Step to recognize target objects. Click the Config wizard button in the Step Parameters panel of the 3D Target Object Recognition Step to open the 3D Target Object Recognition tool and configure the relevant settings. The overall configuration process is shown in the figure below.

Point Cloud Preprocessing

In point cloud preprocessing, adjust the parameters to make the original point cloud clearer, thus improving recognition accuracy and efficiency.

- Set an effective recognition area to block out interference factors and improve recognition efficiency.
- Set the Edge extraction effect, Noise removal level, and Point filter parameters to remove noise.

After point cloud preprocessing, click the Run Step button.

Recognize Target Object

After point cloud preprocessing, you need to create a point cloud model for the target object in the Target Object Editor, and then set matching parameters in the 3D Target Object Recognition tool for point cloud model matching.

- Create a target object model.

Create a point cloud model and add the pick point: click the Open target object editor button to open the editor, and generate a point cloud model based on the point cloud acquired by the camera.

- Set parameters related to object recognition.

  - Enable Advanced mode on the right side of Recognize target object.
  - Matching mode: Enable Auto-set matching mode. Once it is enabled, the Step automatically adjusts the parameters under Coarse matching settings and Fine matching settings.
  - Coarse matching settings: To reduce matching time, set the Performance mode to High speed.
  - Confidence settings: To improve recognition accuracy, set the Confidence strategy to Manual and adjust the following parameters:
    - Set the Joint scoring strategy to Consider both surface and edge.
    - Set Surface matching confidence threshold to 0.8000 and Edge matching confidence threshold to 0.4000.
  - Output > Max outputs: Minimize the number of outputs to reduce matching time while still meeting path planning requirements. In this solution, Max outputs is set to 10.

After setting the above parameters, click the Run Step button. The matching result is shown in the figure below.

Configure Step Ports

After target object recognition, Step ports should be configured to provide vision results and point clouds for Mech-Viz for path planning and collision detection.

To ensure that objects can be successfully picked by the robot, you need to adjust the center point of the target object so that its Z-axis points upwards. Under Select port, select Port(s) related to object center point. To provide the point cloud used for collision detection in Mech-Viz, also select the Preprocessed point cloud option. Finally, click the Save button. New output ports are added to the 3D Target Object Recognition Step.

Adjust Poses

After obtaining the target object poses, you need to use the Adjust Poses V2 Step to adjust the poses. Click the Config wizard button in the Step Parameters panel of the Adjust Poses V2 Step to open the pose adjustment tool for pose adjustment configuration. The overall configuration process is shown in the figure below.

- To output the target object poses in the robot reference frame, select the Transform pose to robot reference frame checkbox to transform the poses from the camera reference frame to the robot reference frame.
- Set Orientation to Auto alignment and Application scenario to Align Z-axes (Machine tending) so that the robot picks in a specified direction and avoids collisions.
- Set the Sorting type to Sort by X/Y/Z value of pose, set Specified value of the pose to Z-coordinate, and sort the poses in Descending order.
- To reduce the time required for subsequent path planning, filter out target objects that cannot be easily picked based on the angle between the Z-axis of the pose and the reference direction. In this solution, set the Max angle difference to 90°.
- Set an ROI to filter out poses outside the ROI.
- General settings.

Set number of new ports to 1, which adds a new input port and a new output port to the Step. Connect the input port to the Target Object Names output port of the 3D Target Object Recognition Step, and connect the output port to the Output Step.
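The ROI filter, the angle filter, and the descending Z sort configured above can be combined into one selection function. This is a minimal sketch under stated assumptions: the reference direction is taken to be robot +Z, the ROI is an axis-aligned box, and a pose exactly at the Max angle difference is kept. The `Pose` record and function names are hypothetical, not the Adjust Poses V2 implementation.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Pose:
    """Hypothetical pose record: position in mm and unit Z-axis, both in the robot frame."""

    position: Vec3
    z_axis: Vec3


def angle_deg(a: Vec3, b: Vec3) -> float:
    """Angle between two vectors, in degrees."""
    dot = sum(p * q for p, q in zip(a, b))
    norm = math.hypot(*a) * math.hypot(*b)
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


def select_poses(
    poses: list[Pose],
    roi_min: Vec3,
    roi_max: Vec3,
    reference: Vec3 = (0.0, 0.0, 1.0),
    max_angle_deg: float = 90.0,
) -> list[Pose]:
    """Drop poses outside the ROI or tilted too far from reference; sort by Z, highest first."""
    kept = [
        p
        for p in poses
        if all(lo <= v <= hi for v, lo, hi in zip(p.position, roi_min, roi_max))
        and angle_deg(p.z_axis, reference) <= max_angle_deg
    ]
    return sorted(kept, key=lambda p: p.position[2], reverse=True)


poses = [
    Pose((0.0, 0.0, 100.0), (0.0, 0.0, 1.0)),
    Pose((50.0, 0.0, 200.0), (0.0, 0.6, 0.8)),   # tilted about 37 degrees: kept
    Pose((0.0, 50.0, 300.0), (0.0, 0.0, -1.0)),  # facing down (180 degrees): dropped
    Pose((900.0, 0.0, 250.0), (0.0, 0.0, 1.0)),  # outside the ROI: dropped
]
result = select_poses(poses, roi_min=(-500.0, -500.0, 0.0), roi_max=(500.0, 500.0, 500.0))
print([p.position[2] for p in result])  # [200.0, 100.0]
```

Sorting by descending Z hands the topmost parts to path planning first, which are usually the least obstructed.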

Path Planning

Once the target object recognition is complete, you can use Mech-Viz to plan a path and then write a robot program for picking the target objects.

The process of path planning configuration is shown in the figure below.

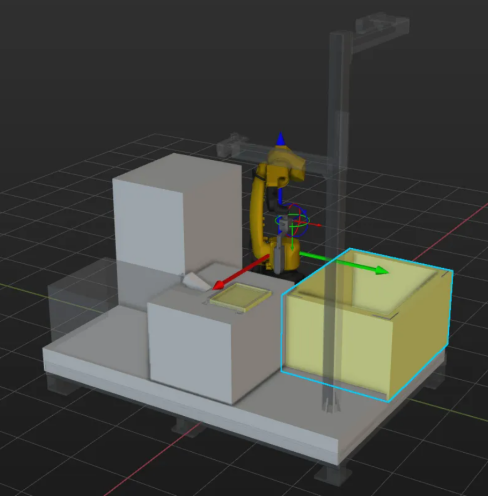

Configure Scene Objects

Scene objects are introduced to make the scene in the software closer to the real scenario, which facilitates the robot path planning. For detailed instructions, please refer to Configure Scene Objects.

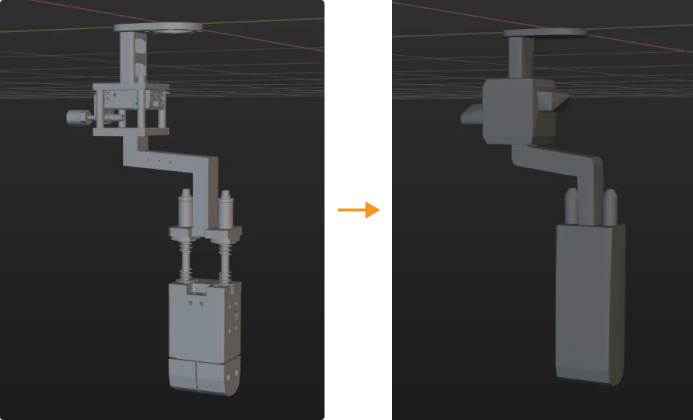

To ensure effective picking, scene objects should be configured to accurately represent the real operating environment. The scene objects in this solution are configured as shown below.

Configure Robot Tool

The end tool should be configured so that its model can be displayed in the 3D simulation area and used for collision detection. For detailed instructions, please refer to Configure Tool.


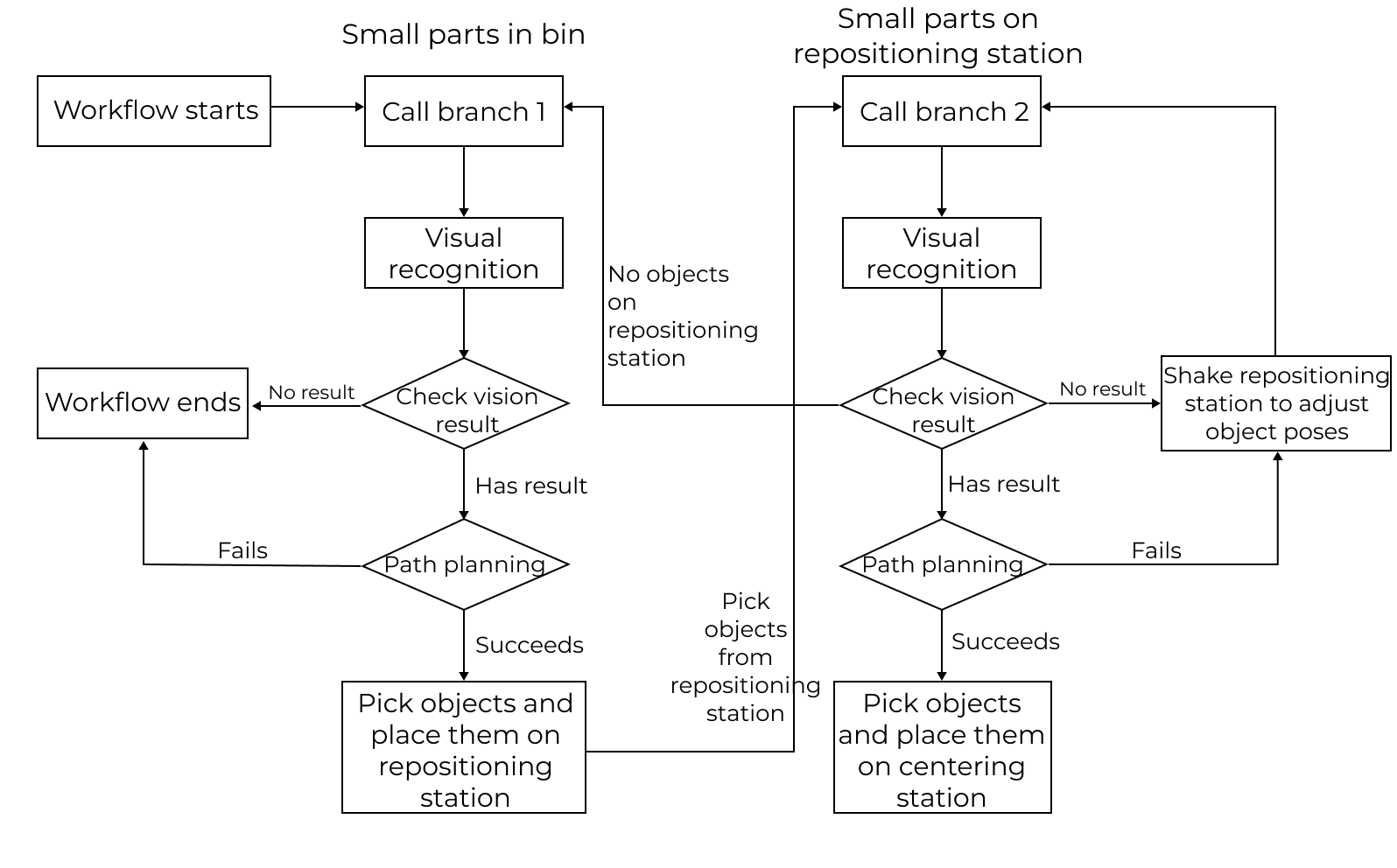

Adjust the Workflow

The workflow refers to the robot motion control program created in Mech-Viz in the form of a flowchart. After the scene objects and end tools are configured, you can adjust the project workflow according to the actual requirements. The flowchart of the logical processing when picking the target object is shown below.

The Mech-Viz project consists of the following two branches:

- Branch 1 corresponds to the picking steps that follow the Small Metal Parts in Deep Bin project in Mech-Vision. Use the Visual Recognition Step to start this project, which inputs the poses of small metal parts in the deep bin to Mech-Viz for path planning and placement on the repositioning station.
- Branch 2 corresponds to the picking steps that follow the Small Metal Parts on Repositioning Station project in Mech-Vision. Use the Visual Recognition Step to start this project, which inputs the poses of small metal parts on the repositioning station to Mech-Viz for path planning and placement on the centering station.

After all small metal parts on the repositioning station have been picked, the project restarts from branch 1 and repeats the whole process until all small metal parts are picked from the bin.

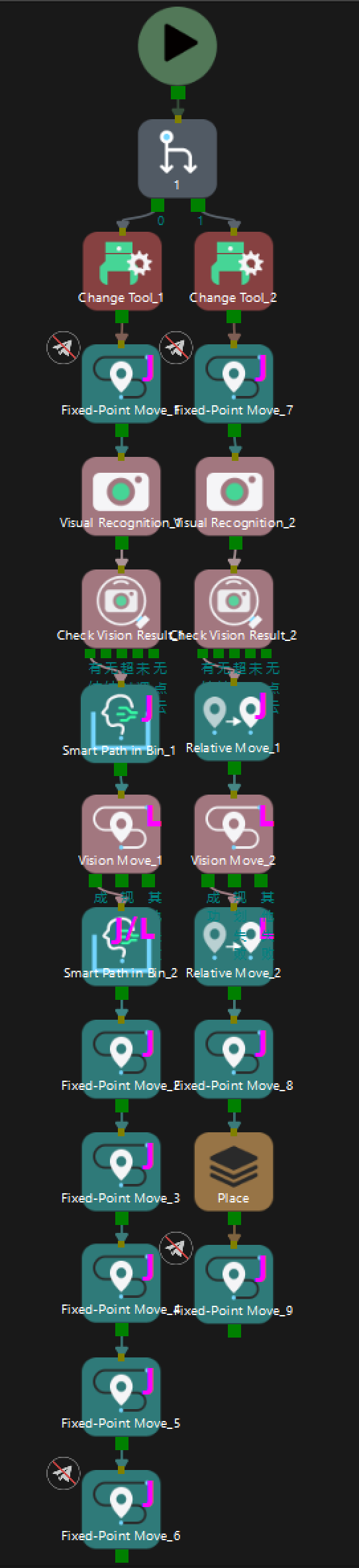

The workflow of the project is shown in the figure below.

The two Fixed-Point Move Steps at the beginning and end of the workflow are waypoints determined by robot jogging and do not need to be sent to external devices. The other move-type Steps will send waypoints to external devices, and each Smart Path in Bin Step corresponds to two waypoints. Thus, a total of 9 waypoints will be sent from branch 1 and 7 waypoints from branch 2.

Simulate and Test

Click the Simulate button on the toolbar to test whether the vision system is set up successfully by simulating the Mech-Viz project.

Place the target objects randomly in the bin and click Simulate in the Mech-Viz toolbar to simulate picking. After each successful pick, rearrange the target objects, and conduct 10 simulation tests in total. If all 10 simulations result in successful picks, the vision system has been set up successfully.

If an exception occurs during simulation, refer to the Solution Deployment FAQs to resolve the problem.

Robot Picking and Placing

Write a Robot Program

If the simulation result meets expectations, you can write a pick-and-place program for the FANUC robot.

The example program MM_S10_Viz_Subtask for the FANUC robot can basically satisfy the requirements of this typical case. You can modify the example program. For a detailed explanation of this program, please refer to S10 Example Program Explanation.

Modification Instruction

This example consists of two programs. The secondary program triggers the Mech-Viz project to run and obtains the planned path for picking small parts in the bin. The primary program moves the robot along the planned path and, once the robot leaves the picking area, triggers the secondary program to obtain the next planned path, which shortens the cycle time. Based on the example program, modify the program files as follows (line numbers in the listings refer to the lines of the example program):

- Secondary program

  - Add branch commands to trigger the camera to capture images.

Before modification:

```
10: !trigger Mech-Viz project ;
11: CALL MM_START_VIZ(2,10) ;
12: !get planned path, 1st argument ;
13: !(1) means getting pose in JPs ;
14: CALL MM_GET_VIZ(1,51,52,53) ;
```

After modification (example):

```
10: !trigger Mech-Viz project ;
11: CALL MM_START_VIZ(2,10) ;
12: WAIT .10(sec) ;
13: !(Branch_Num,Exit_Num) ;
14: CALL MM_SET_BCH(1,1) ;
15: !get planned path, 1st argument ;
16: !(1) means getting pose in JPs ;
17: CALL MM_GET_VIZ(1,51,52,53) ;
```

  - Add commands to store all returned waypoints in position registers.

The planned path returned by Mech-Viz contains nine waypoints: enter-bin point, approach point for picking, pick point, retreat point for picking, exit-bin point, shake point 1, shake point 2, exit-bin point, and placing point. The example program stores only three waypoints, so you need to add commands to store the complete planned path.

Before modification:

```
22: CALL MM_GET_JPS(1,60,70,80) ;
23: CALL MM_GET_JPS(2,61,71,81) ;
24: CALL MM_GET_JPS(3,62,72,82) ;
```

After modification (example):

```
25: CALL MM_GET_JPS(1,60,70,80) ;
26: CALL MM_GET_JPS(2,61,71,81) ;
27: CALL MM_GET_JPS(3,62,72,82) ;
28: CALL MM_GET_JPS(4,63,70,80) ;
29: CALL MM_GET_JPS(5,64,71,81) ;
30: CALL MM_GET_JPS(6,65,72,82) ;
31: CALL MM_GET_JPS(7,66,70,80) ;
32: CALL MM_GET_JPS(8,67,71,81) ;
33: CALL MM_GET_JPS(9,68,72,82) ;
```
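Because the nine calls follow a regular pattern, they can be generated rather than typed by hand, which makes it easy to check that each waypoint gets its own position register. The Python helper below is a hypothetical convenience, not part of the Mech-Mind tooling. It only reproduces the register numbers of the modified example (PR[60] to PR[68] for the waypoints, with the third and fourth arguments repeating 70-72 and 80-82 as above); for the meaning of each argument, see S10 Example Program Explanation.

```python
# Waypoint order of the planned path returned by Mech-Viz in this solution.
WAYPOINTS = [
    "enter-bin point",
    "approach point for picking",
    "pick point",
    "retreat point for picking",
    "exit-bin point",
    "shake point 1",
    "shake point 2",
    "exit-bin point",
    "placing point",
]


def get_jps_commands(first_pr: int = 60) -> list[str]:
    """Build one MM_GET_JPS call per waypoint, following the example's register pattern."""
    commands = []
    for index in range(1, len(WAYPOINTS) + 1):
        pr = first_pr + index - 1  # PR[60]..PR[68], one per waypoint
        cycle = (index - 1) % 3    # the example reuses 70-72 and 80-82 cyclically
        commands.append(f"CALL MM_GET_JPS({index},{pr},{70 + cycle},{80 + cycle}) ;")
    return commands


for name, command in zip(WAYPOINTS, get_jps_commands()):
    print(f"{name}: {command}")
```

The printed list doubles as a cross-reference between waypoint names and PR numbers when editing the primary program.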


- Primary program

  - Set the tool reference frame. Verify that the TCP on the robot teach pendant matches the TCP in Mech-Viz, and set the currently selected tool frame number to the one corresponding to the actual tool in use.

Before modification:

```
9: !set current tool NO. to 1 ;
10: UTOOL_NUM=1 ;
```

After modification (example):

```
9: !set current tool NO. to 3 ;
10: UTOOL_NUM=3 ;
```

Replace the number with that of the tool actually in use; “3” is only an example.

  - Specify the IP address and port number of the IPC. Change the IP address and port number in the CALL MM_INIT_SKT command to those of the vision system.

Before modification:

```
16: CALL MM_INIT_SKT('8','127.0.0.1',50000,5) ;
```

After modification (example):

```
16: CALL MM_INIT_SKT('8','127.1.1.2',50000,6) ;
```

  - Adjust the position register IDs for the enter-bin point, approach point for picking, pick point, retreat point for picking, exit-bin point, shake point 1, shake point 2, exit-bin point, and placing point.

Before modification:

```
32: !move to approach waypoint ;
33: !of picking ;
34:J PR[60] 50% FINE ;
35: !move to picking waypoint ;
36:J PR[61] 10% FINE ;
...
40: !move to departure waypoint ;
41: !of picking ;
42:J PR[62] 50% FINE ;
43: !move to intermediate waypoint ;
44: !of placing, and trigger Mech-Viz ;
45: !project and get planned path in ;
46: !advance ;
47:J P[3] 50% CNT100 DB 10.0mm,CALL MM_S10_SUB ;
48: !move to approach waypoint ;
49: !of placing ;
50:L P[4] 1000mm/sec FINE Tool_Offset,PR[2] ;
51: !move to placing waypoint ;
52:L P[4] 300mm/sec FINE ;
```

After modification:

```
32: !move to approach waypoint ;
33: !of picking ;
34: J PR[60] 100% CNT100 ;
35: J PR[61] 100% CNT100 ;
36: !move to picking waypoint ;
37: L PR[62] 200mm/sec FINE ;
...
42: !move to departure waypoint ;
43: !of picking ;
44: L PR[63] 500mm/sec CNT100 ;
45: J PR[64] 100% CNT100 ;
46: !Shake ;
47: J PR[65] 100% CNT40 ;
48: J PR[66] 100% CNT40 ;
49: J PR[65] 100% CNT40 ;
50: J PR[66] 100% CNT40 ;
51: !move to intermediate waypoint ;
52: !of placing, and trigger Mech-Viz ;
53: !project and get planned path in ;
54: !advance ;
55: J PR[67] 100% CNT40 ;
56: CALL MM_S10_SUB ;
57: !move to approach waypoint ;
58: !of placing ;
59: J PR[68] 100% FINE ;
```

In the modified example program, PR[60] stores the enter-bin point, PR[61] the approach point for picking, PR[62] the pick point, PR[63] the retreat point for picking, PR[64] the exit-bin point, PR[65] and PR[66] shake points 1 and 2, PR[67] the second exit-bin point, and PR[68] the placing point.

  - Set the DO signal that performs picking, i.e., closes the gripper to pick the target object. Set the DO command according to the actual DO port number used on site.

Before modification:

```
37: !add object grasping logic here, ;
38: !such as "DO[1]=ON" ;
39: PAUSE ;
```

After modification (example):

```
38: !Add object grasping logic here ;
39: DO[107]=ON ;
40: DO[108]=OFF ;
41: WAIT .50(sec) ;
```

  - Set the DO signal that performs placing. Set the DO command according to the actual DO port number used on site.

Before modification:

```
53: !add object releasing logic here, ;
54: !such as "DO[1]=OFF" ;
55: PAUSE ;
```

After modification (example):

```
60: !add object releasing logic here, ;
61: !such as "DO[0]=OFF" ;
62: WAIT .50(sec) ;
63: DO[107]=OFF ;
64: DO[108]=ON ;
65: WAIT 1.00(sec) ;
```

  - Move the robot to the waiting position for picking on the repositioning station.

Before modification:

```
56: !move to departure waypoint ;
57: !of placing ;
58:L P[4] 1000mm/sec FINE Tool_Offset,PR[2] ;
```

After modification (example):

```
66: !move to departure waypoint ;
67: !of placing ;
68: !Move to HOME2 Position ;
69: J P[3:HOME2] 100% FINE ;
```

For the logic of modifying the program when the robot picks small parts on the repositioning station, refer to the modification instructions above.
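The cycle-time benefit of splitting the example into a primary and a secondary program comes from overlapping path planning with robot motion: the next path is requested right after the robot exits the bin (PR[67]), and the robot places the part while planning runs. The Python sketch below only models that ordering with a background thread. `plan_path` and `run_picking` are hypothetical names, the real programs are FANUC TP programs calling MM_S10_SUB, and the shake motion is simplified to visiting each waypoint once.

```python
from concurrent.futures import ThreadPoolExecutor


def plan_path(cycle: int) -> list[str]:
    """Hypothetical stand-in for the secondary program: trigger Mech-Viz, return 9 waypoints."""
    return [f"cycle{cycle}-wp{i}" for i in range(1, 10)]


def run_picking(cycles: int) -> list[str]:
    """Simulate the primary program; return the waypoints in the order they are visited."""
    visited: list[str] = []
    with ThreadPoolExecutor(max_workers=1) as planner:
        pending = planner.submit(plan_path, 0)  # the first path is requested before any motion
        for cycle in range(cycles):
            path = pending.result()  # waits only if planning has not finished yet
            visited.extend(path[:8])  # PR[60]..PR[67]: bin entry, pick, shake, bin exit
            if cycle + 1 < cycles:
                # The robot is out of the picking area: plan the next cycle while it places.
                pending = planner.submit(plan_path, cycle + 1)
            visited.extend(path[8:])  # PR[68]: placing point
    return visited


print(run_picking(2)[8:10])  # ['cycle0-wp9', 'cycle1-wp1']
```

In this model, the robot waits for planning only if planning takes longer than the placing motion, which is where the cycle-time saving comes from.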

Picking Test

To ensure stable production in the actual scenario, the modified example program should be run to perform the picking test with the robot. For detailed instructions, please refer to Test Standard Interface Communication.

Before performing the picking test, please teach the following waypoints.

| Name | Variable | Description |
|---|---|---|
| Home position | P[1] | The taught initial position for picking from the deep bin. It should be away from the objects to be picked and surrounding devices, and should not block the camera’s field of view. |
| Image-capturing position | P[2] | The taught image-capturing position, i.e., the robot position at which the camera captures images. At this position, the robot arm should not block the camera’s field of view. |
| Intermediate waypoint | P[3:HOME2] | The taught initial position for picking on the repositioning station. It should be away from the objects to be picked and surrounding devices, and should not block the camera’s field of view. |

After teaching, arrange the target objects as shown in the table below, and use the robot to conduct picking tests for all arrangements at a low speed.

The picking tests can be divided into two phases: picking in the bin and picking on the repositioning station:

Phase 1: Bin Picking Test

Since there is no requirement to clear the bin for in-bin picking, only 5 low-speed picking loops need to be performed in actual random picking scenarios.

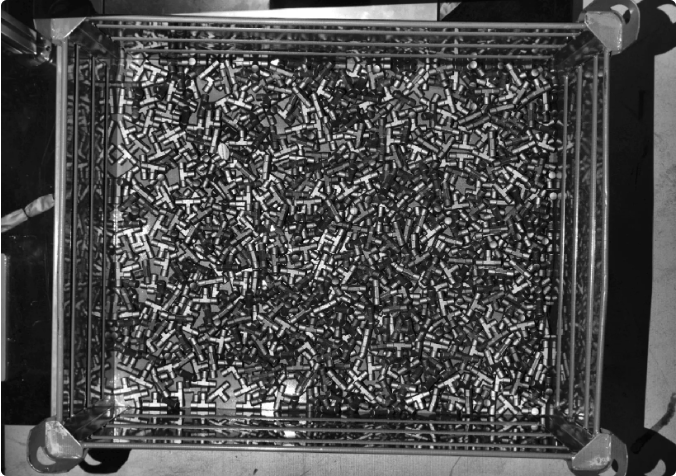

Object placement status |

Illustration |

Similar to the real scenario, the target objects are randomly stacked. |

|

Phase 2: Repositioning Station Picking Test

Arrange the target objects as shown in the following five scenarios, and use the robot to conduct a picking loop for all arrangements at a low speed.

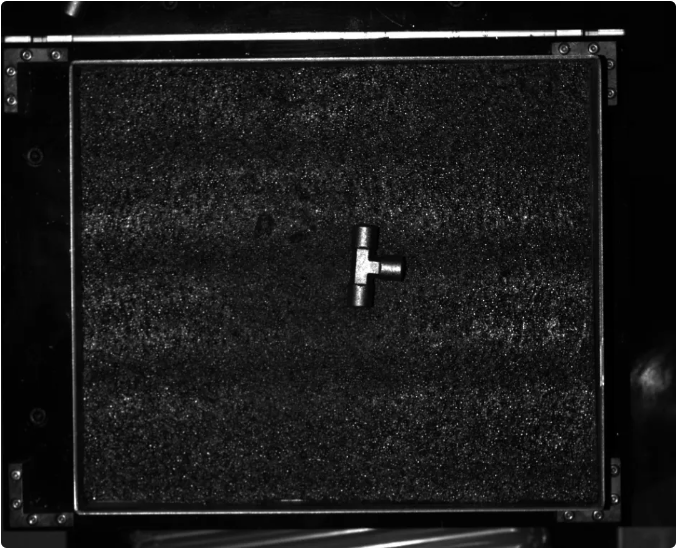

Object placement status |

Illustration |

The target object is placed in the middle of the repositioning station |

|

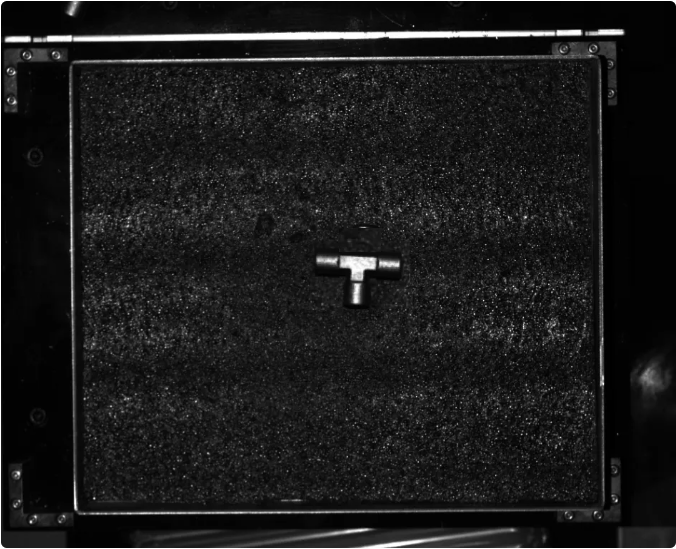

The target object is rotated by 90° |

|

The target object is rotated by 180° |

|

The target object is rotated by 270° |

|

Similar to the real scenario, the target objects are randomly stacked. |

|

If the robot successfully picks the target object(s) in the test scenarios above, the vision system can be considered successfully deployed.