Get Started

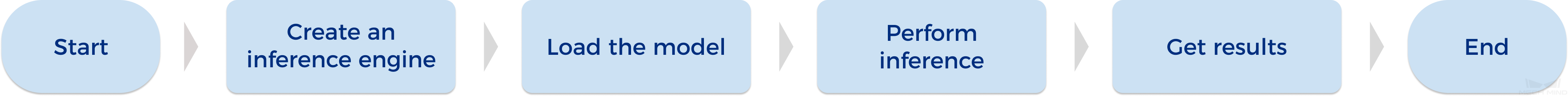

This chapter shows how to use Mech-DLK SDK to run inference with a Defect Segmentation model exported from Mech-DLK.

Prerequisites

- Download and install the Sentinel LDK encryption driver. After installation, verify that the encryption driver is present on the IPC. If Mech-DLK is already installed on your device, you do not need to install the encryption driver again because it is already in place.

- Obtain and manage the software license.

Function Description

This section uses a Defect Segmentation model exported from Mech-DLK as an example to show the functions needed for model inference with Mech-DLK SDK.

Note: Starting from Mech-DLK SDK 3.0.0, C interfaces are no longer supported. The following examples cover only C#, C++, and Python.

Create an Input Image

Call the following function to create an input image.

- C#

MMindImage image = new MMindImage();

StatusCode status = image.CreateFromPath("path/to/image.png");

List<MMindImage> images = new List<MMindImage> { image };

- C++

mmind::dl::MMindImage image;

image.createFromPath("path/to/image.png");

std::vector<mmind::dl::MMindImage> images = {image};

- Python

import mmind_dl_sdk as mmind

image = mmind.MMindImage()

status = image.create_from_path("path/to/image.png")

images = [image]

Create an Inference Engine

Call the following function to create an inference engine.

- C#

MMindInferEngine engine = new MMindInferEngine();

engine.Create("path/to/xxx.dlkpack");

StatusCode status = engine.Load();

In the Mech-DLK SDK C# interface, only the model path parameter is required to create the engine.

- C++

mmind::dl::MMindInferEngine engine;

engine.create(L"path/to/xxx.dlkpack");

// engine.setInferDeviceType(mmind::dl::InferDeviceType::GPUDefault);

// engine.setDeviceId(0);

// engine.setBatchSize("DefectSegmentation_0", 1);

// engine.setFloatPrecision("DefectSegmentation_0", mmind::dl::PrecisionType::FP32);

engine.load();

In the C++ interface, model parameters can be set as needed before loading, as the commented-out calls above show: inference device type, device ID, batch size, and float precision.

- Python

engine = mmind.MMindInferEngine()

engine.create("path/to/xxx.dlkpack")

status = engine.load()

In the Python interface, only the model path parameter is needed to create the engine.
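Both the image and the engine are created from file paths, so a wrong path is an easy mistake to make before any SDK call runs. The following is a minimal sketch of a pre-flight check using only the Python standard library. `check_inputs` is a hypothetical helper, not part of Mech-DLK SDK, and the `.dlkpack` suffix check is an assumption based on the example model path used in this chapter.

```python
from pathlib import Path


def check_inputs(model_path, image_paths):
    """Return a list of problems found; an empty list means the paths look usable.

    Hypothetical helper: it only inspects the file system and never calls the SDK.
    """
    problems = []

    model = Path(model_path)
    # Assumption: exported models use the .dlkpack extension, as in the examples.
    if model.suffix.lower() != ".dlkpack":
        problems.append(f"model file does not end in .dlkpack: {model}")
    if not model.is_file():
        problems.append(f"model file not found: {model}")

    for image in map(Path, image_paths):
        if not image.is_file():
            problems.append(f"image file not found: {image}")

    return problems
```

Running this check before creating the image and the engine means that a returned status can be investigated with path problems already ruled out.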

Deep Learning Engine Inference

Call the function below to run inference with the deep learning engine.

- C#

engine.Infer(images);

- C++

engine.infer(images);

- Python

status = engine.infer(images)

Obtain the Defect Segmentation Result

Call the function below to obtain the defect segmentation result.

- C#

List<MMindResult> results;

engine.GetModuleResult("DefectSegmentation_0", out results);

- C++

std::vector<mmind::dl::MMindResult> results;

engine.getModuleResult("DefectSegmentation_0", results);

- Python

status, results = engine.get_module_result("DefectSegmentation_0")

Visualize Result

Call the function below to visualize the model inference result.

- C#

engine.ResultVisualization(images);

images[0].Show("result");

- C++

engine.resultVisualization(images);

images[0].show("result");

- Python

status, vis_images = engine.result_visualization(images)

vis_images[0].show("result")
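The Python snippets in this chapter can be chained into a single function, sketched below. It uses only the calls shown above (`MMindImage`, `create_from_path`, `MMindInferEngine`, `create`, `load`, `infer`, `get_module_result`, `result_visualization`). The function name `run_defect_segmentation`, the `sdk` parameter, and the `statuses` dictionary are hypothetical conveniences. Status values are returned unchanged because this chapter does not describe how a successful status is represented.

```python
def run_defect_segmentation(sdk, model_path, image_path,
                            module_name="DefectSegmentation_0"):
    """Run the steps of this chapter in order.

    `sdk` is the imported SDK module, for example the result of
    `import mmind_dl_sdk as sdk`. Passing it in as an argument is an
    illustrative choice that keeps this sketch independent of the import.
    Returns (statuses, results, vis_images).
    """
    statuses = {}

    # Step 1: create the input image and wrap it in a list.
    image = sdk.MMindImage()
    statuses["create_from_path"] = image.create_from_path(image_path)
    images = [image]

    # Step 2: create the inference engine from the model file and load it.
    engine = sdk.MMindInferEngine()
    engine.create(model_path)
    statuses["load"] = engine.load()

    # Step 3: run inference on the image list.
    statuses["infer"] = engine.infer(images)

    # Step 4: fetch the result of the defect segmentation module.
    statuses["get_module_result"], results = engine.get_module_result(module_name)

    # Step 5: render the results for visualization.
    statuses["result_visualization"], vis_images = engine.result_visualization(images)

    return statuses, results, vis_images
```

With the SDK installed, a call might look like `run_defect_segmentation(mmind, "path/to/xxx.dlkpack", "path/to/image.png")` after `import mmind_dl_sdk as mmind`, followed by `vis_images[0].show("result")` to display the first visualized image.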